Compare commits

54 Commits

skip-chang

...

notificati

| Author | SHA1 | Date | |
|---|---|---|---|
| | a357c82e6f | | |
| | d4559886b1 | | |
| | 19c96f4bdd | | |
| | 82b900fbf4 | | |
| | 358a365303 | | |
| | a07ca4b136 | | |
| | ba8cf2c8cf | | |
| | 3106b6688e | | |
| | 2c83845dac | | |
| | 111266d6fa | | |
| | ead610151f | | |
| | 7e1e763989 | | |
| | 327cc4af34 | | |
| | 6008ff516e | | |
| | cdcf4b353f | | |
| | 1ab70f8e86 | | |
| | 8227c012a7 | | |
| | c113d5fb24 | | |
| | 8c8d4066d7 | | |
| | 277dc9e1c1 | | |
| | fc0fd1ce9d | | |
| | bd6127728a | | |
| | 4101ae00c6 | | |
| | 62f14df3cb | | |
| | 560d465c59 | | |
| | 7929aeddfc | | |
| | 8294519f43 | | |
| | 8ba8a220b6 | | |
| | aa3c8a9370 | | |
| | dbb5468cdc | | |
| | 329c7620fb | | |
| | 1f974bfbb0 | | |
| | 437c8525af | | |
| | a2a1d5ae90 | | |
| | 2566de2aae | | |
| | dfec8dbb39 | | |
| | 5cefb16e52 | | |
| | 341ae24b73 | | |
| | f47c2fb7f6 | | |
| | 9d742446ab | | |
| | e3e022b0f4 | | |
| | 6de4027c27 | | |
| | cda3837355 | | |
| | 7983675325 | | |
| | eef56e52c6 | | |
| | 8e3195f394 | | |
| | e17c2121f7 | | |
| | 07e279b38d | | |
| | 2c834cfe37 | | |
| | dbb5c666f0 | | |
| | 70b3493866 | | |
| | 3b11c474d1 | | |
| | 890e1e6dcd | | |
| | 6734fb91a2 | | |

11

.github/ISSUE_TEMPLATE/bug_report.md

vendored

@@ -1,9 +1,9 @@

---

name: Bug report

about: Create a report to help us improve

about: Create a bug report, if you don't follow this template, your report will be DELETED

title: ''

labels: ''

assignees: ''

labels: 'triage'

assignees: 'dgtlmoon'

---

@@ -11,15 +11,18 @@ assignees: ''

A clear and concise description of what the bug is.

**Version**

In the top right area: 0....

*Exact version* in the top right area: 0....

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

! ALWAYS INCLUDE AN EXAMPLE URL WHERE IT IS POSSIBLE TO RE-CREATE THE ISSUE - USE THE 'SHARE WATCH' FEATURE AND PASTE IN THE SHARE-LINK!

**Expected behavior**

A clear and concise description of what you expected to happen.

4

.github/ISSUE_TEMPLATE/feature_request.md

vendored

@@ -1,8 +1,8 @@

---

name: Feature request

about: Suggest an idea for this project

title: ''

labels: ''

title: '[feature]'

labels: 'enhancement'

assignees: ''

---

1

.gitignore

vendored

@@ -8,5 +8,6 @@ __pycache__

build

dist

venv

test-datastore

*.egg-info*

.vscode/settings.json

@@ -1,3 +1,4 @@

recursive-include changedetectionio/api *

recursive-include changedetectionio/templates *

recursive-include changedetectionio/static *

recursive-include changedetectionio/model *

21

README.md

@@ -12,7 +12,7 @@ Live your data-life *pro-actively* instead of *re-actively*.

|

||||

Free, Open-source web page monitoring, notification and change detection. Don't have time? [**Try our $6.99/month subscription - unlimited checks and watches!**](https://lemonade.changedetection.io/start)

|

||||

|

||||

|

||||

[<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/screenshot.png" style="max-width:100%;" alt="Self-hosted web page change monitoring" title="Self-hosted web page change monitoring" />](https://lemonade.changedetection.io/start)

|

||||

[<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/screenshot.png" style="max-width:100%;" alt="Self-hosted web page change monitoring" title="Self-hosted web page change monitoring" />](https://lemonade.changedetection.io/start)

|

||||

|

||||

|

||||

**Get your own private instance now! Let us host it for you!**

|

||||

@@ -48,12 +48,19 @@ _Need an actual Chrome runner with Javascript support? We support fetching via W

|

||||

|

||||

## Screenshots

|

||||

|

||||

Examining differences in content.

|

||||

### Examine differences in content.

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/screenshot-diff.png" style="max-width:100%;" alt="Self-hosted web page change monitoring context difference " title="Self-hosted web page change monitoring context difference " />

|

||||

Easily see what changed, examine by word, line, or individual character.

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/screenshot-diff.png" style="max-width:100%;" alt="Self-hosted web page change monitoring context difference " title="Self-hosted web page change monitoring context difference " />

|

||||

|

||||

Please :star: star :star: this project and help it grow! https://github.com/dgtlmoon/changedetection.io/

|

||||

|

||||

### Target elements with the Visual Selector tool.

|

||||

|

||||

Available when connected to a <a href="https://github.com/dgtlmoon/changedetection.io/wiki/Playwright-content-fetcher">playwright content fetcher</a> (available also as part of our subscription service)

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/visualselector-anim.gif" style="max-width:100%;" alt="Self-hosted web page change monitoring context difference " title="Self-hosted web page change monitoring context difference " />

|

||||

|

||||

## Installation

|

||||

|

||||

@@ -129,7 +136,7 @@ Just some examples

|

||||

|

||||

<a href="https://github.com/caronc/apprise#popular-notification-services">And everything else in this list!</a>

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/screenshot-notifications.png" style="max-width:100%;" alt="Self-hosted web page change monitoring notifications" title="Self-hosted web page change monitoring notifications" />

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/screenshot-notifications.png" style="max-width:100%;" alt="Self-hosted web page change monitoring notifications" title="Self-hosted web page change monitoring notifications" />

|

||||

|

||||

Now you can also customise your notification content!

|

||||

|

||||

@@ -137,11 +144,11 @@ Now you can also customise your notification content!

|

||||

|

||||

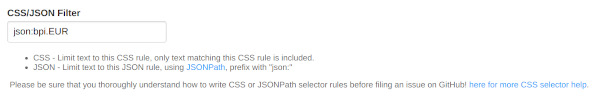

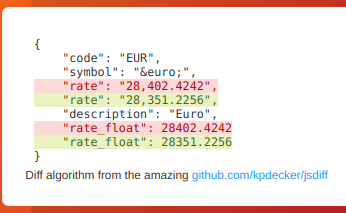

Detect changes and monitor data in JSON API's by using the built-in JSONPath selectors as a filter / selector.

|

||||

|

||||

|

||||

|

||||

|

||||

This will re-parse the JSON and apply formatting to the text, making it super easy to monitor and detect changes in JSON API results

|

||||

|

||||

|

||||

|

||||

|

||||

### Parse JSON embedded in HTML!

|

||||

|

||||

@@ -177,7 +184,7 @@ Or directly donate an amount PayPal [

|

||||

from flask_login import login_required

|

||||

from flask_restful import abort, Api

|

||||

|

||||

from flask_wtf import CSRFProtect

|

||||

|

||||

from changedetectionio import html_tools

|

||||

from changedetectionio.api import api_v1

|

||||

|

||||

__version__ = '0.39.13.1'

|

||||

__version__ = '0.39.15'

|

||||

|

||||

datastore = None

|

||||

|

||||

@@ -78,6 +82,8 @@ csrf.init_app(app)

|

||||

|

||||

notification_debug_log=[]

|

||||

|

||||

watch_api = Api(app, decorators=[csrf.exempt])

|

||||

|

||||

def init_app_secret(datastore_path):

|

||||

secret = ""

|

||||

|

||||

@@ -102,7 +108,7 @@ def _jinja2_filter_datetime(watch_obj, format="%Y-%m-%d %H:%M:%S"):

|

||||

# Worker thread tells us which UUID it is currently processing.

|

||||

for t in running_update_threads:

|

||||

if t.current_uuid == watch_obj['uuid']:

|

||||

return "Checking now.."

|

||||

return '<span class="loader"></span><span> Checking now</span>'

|

||||

|

||||

if watch_obj['last_checked'] == 0:

|

||||

return 'Not yet'

|

||||

@@ -173,12 +179,35 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

global datastore

|

||||

datastore = datastore_o

|

||||

|

||||

# so far just for read-only via tests, but this will be moved eventually to be the main source

|

||||

# (instead of the global var)

|

||||

app.config['DATASTORE']=datastore_o

|

||||

|

||||

#app.config.update(config or {})

|

||||

|

||||

login_manager = flask_login.LoginManager(app)

|

||||

login_manager.login_view = 'login'

|

||||

app.secret_key = init_app_secret(config['datastore_path'])

|

||||

|

||||

|

||||

watch_api.add_resource(api_v1.WatchSingleHistory,

|

||||

'/api/v1/watch/<string:uuid>/history/<string:timestamp>',

|

||||

resource_class_kwargs={'datastore': datastore, 'update_q': update_q})

|

||||

|

||||

watch_api.add_resource(api_v1.WatchHistory,

|

||||

'/api/v1/watch/<string:uuid>/history',

|

||||

resource_class_kwargs={'datastore': datastore})

|

||||

|

||||

watch_api.add_resource(api_v1.CreateWatch, '/api/v1/watch',

|

||||

resource_class_kwargs={'datastore': datastore, 'update_q': update_q})

|

||||

|

||||

watch_api.add_resource(api_v1.Watch, '/api/v1/watch/<string:uuid>',

|

||||

resource_class_kwargs={'datastore': datastore, 'update_q': update_q})

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

# Setup cors headers to allow all domains

|

||||

# https://flask-cors.readthedocs.io/en/latest/

|

||||

# CORS(app)

|

||||

@@ -293,25 +322,19 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

for watch in sorted_watches:

|

||||

|

||||

dates = list(watch['history'].keys())

|

||||

dates = list(watch.history.keys())

|

||||

# Re #521 - Don't bother processing this one if theres less than 2 snapshots, means we never had a change detected.

|

||||

if len(dates) < 2:

|

||||

continue

|

||||

|

||||

# Convert to int, sort and back to str again

|

||||

# @todo replace datastore getter that does this automatically

|

||||

dates = [int(i) for i in dates]

|

||||

dates.sort(reverse=True)

|

||||

dates = [str(i) for i in dates]

|

||||

prev_fname = watch['history'][dates[1]]

|

||||

prev_fname = watch.history[dates[-2]]

|

||||

|

||||

if not watch['viewed']:

|

||||

if not watch.viewed:

|

||||

# Re #239 - GUID needs to be individual for each event

|

||||

# @todo In the future make this a configurable link back (see work on BASE_URL https://github.com/dgtlmoon/changedetection.io/pull/228)

|

||||

guid = "{}/{}".format(watch['uuid'], watch['last_changed'])

|

||||

fe = fg.add_entry()

|

||||

|

||||

|

||||

# Include a link to the diff page, they will have to login here to see if password protection is enabled.

|

||||

# Description is the page you watch, link takes you to the diff JS UI page

|

||||

base_url = datastore.data['settings']['application']['base_url']

|

||||

@@ -326,13 +349,14 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

watch_title = watch.get('title') if watch.get('title') else watch.get('url')

|

||||

fe.title(title=watch_title)

|

||||

latest_fname = watch['history'][dates[0]]

|

||||

latest_fname = watch.history[dates[-1]]

|

||||

|

||||

html_diff = diff.render_diff(prev_fname, latest_fname, include_equal=False, line_feed_sep="</br>")

|

||||

fe.description(description="<![CDATA[<html><body><h4>{}</h4>{}</body></html>".format(watch_title, html_diff))

|

||||

fe.content(content="<html><body><h4>{}</h4>{}</body></html>".format(watch_title, html_diff),

|

||||

type='CDATA')

|

||||

|

||||

fe.guid(guid, permalink=False)

|

||||

dt = datetime.datetime.fromtimestamp(int(watch['newest_history_key']))

|

||||

dt = datetime.datetime.fromtimestamp(int(watch.newest_history_key))

|

||||

dt = dt.replace(tzinfo=pytz.UTC)

|

||||

fe.pubDate(dt)

|

||||

|

||||
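Review note on the RSS hunk above: the new code no longer re-sorts the history keys and instead reads `dates[-1]` and `dates[-2]`, which is only correct if `watch.history` yields its keys oldest first. The diff does not show how that ordering is guaranteed, so the following is a minimal sketch that sorts explicitly. `newest_two_snapshots` and the example paths are hypothetical names for illustration, not part of changedetection.io.

```python
from typing import Dict, Optional, Tuple


def newest_two_snapshots(history: Dict[str, str]) -> Optional[Tuple[str, str]]:
    """Return (previous_path, latest_path), or None with fewer than two snapshots.

    Assumption: `history` maps timestamp strings to snapshot file paths,
    as the `watch.history[dates[-1]]` lookups in the diff suggest.
    """
    if len(history) < 2:
        # Mirrors the "Re #521" guard: fewer than two snapshots means no change yet.
        return None
    # Keys are strings, so compare them numerically, oldest first.
    dates = sorted(history.keys(), key=int)
    return history[dates[-2]], history[dates[-1]]


# Hypothetical data, deliberately inserted out of order.
example_history = {
    "1650000300": "/datastore/uuid/c.txt",
    "1650000100": "/datastore/uuid/a.txt",
    "1650000200": "/datastore/uuid/b.txt",
}
print(newest_two_snapshots(example_history))
```

Sorting with `key=int` avoids the lexical-ordering trap where `"999"` would sort after `"1000"`.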

@@ -367,6 +391,8 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

if limit_tag != None:

|

||||

# Support for comma separated list of tags.

|

||||

if watch['tag'] is None:

|

||||

continue

|

||||

for tag_in_watch in watch['tag'].split(','):

|

||||

tag_in_watch = tag_in_watch.strip()

|

||||

if tag_in_watch == limit_tag:

|

||||
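Review note on the tag filter hunk above: the added `if watch['tag'] is None: continue` guard protects the `.split(',')` call that follows. A minimal sketch of the same matching rule as a standalone function is shown here; `watch_matches_tag` is a hypothetical helper name, and the real code performs this check inline inside the watch loop.

```python
from typing import Optional


def watch_matches_tag(watch_tag: Optional[str], limit_tag: Optional[str]) -> bool:
    """Decide whether a watch should be listed for the active tag filter."""
    if limit_tag is None:
        # No filter active: every watch is listed.
        return True
    if watch_tag is None:
        # Same purpose as the guard added in the diff: nothing to split.
        return False
    # Tags are stored as one comma separated string; compare each trimmed entry.
    return any(tag.strip() == limit_tag for tag in watch_tag.split(","))


print(watch_matches_tag("news, tech", "tech"))
print(watch_matches_tag(None, "tech"))
```

Matching is exact per entry, so a watch tagged `technology` is not returned for the filter `tech`.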

@@ -389,11 +415,13 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

tags=existing_tags,

|

||||

active_tag=limit_tag,

|

||||

app_rss_token=datastore.data['settings']['application']['rss_access_token'],

|

||||

has_unviewed=datastore.data['has_unviewed'],

|

||||

has_unviewed=datastore.has_unviewed,

|

||||

# Don't link to hosting when we're on the hosting environment

|

||||

hosted_sticky=os.getenv("SALTED_PASS", False) == False,

|

||||

guid=datastore.data['app_guid'],

|

||||

queued_uuids=update_q.queue)

|

||||

|

||||

|

||||

if session.get('share-link'):

|

||||

del(session['share-link'])

|

||||

return output

|

||||

@@ -430,6 +458,19 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

return 'OK'

|

||||

|

||||

|

||||

@app.route("/scrub/<string:uuid>", methods=['GET'])

|

||||

@login_required

|

||||

def scrub_watch(uuid):

|

||||

try:

|

||||

datastore.scrub_watch(uuid)

|

||||

except KeyError:

|

||||

flash('Watch not found', 'error')

|

||||

else:

|

||||

flash("Scrubbed watch {}".format(uuid))

|

||||

|

||||

return redirect(url_for('index'))

|

||||

|

||||

@app.route("/scrub", methods=['GET', 'POST'])

|

||||

@login_required

|

||||

def scrub_page():

|

||||

@@ -465,10 +506,10 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

# 0 means that theres only one, so that there should be no 'unviewed' history available

|

||||

if newest_history_key == 0:

|

||||

newest_history_key = list(datastore.data['watching'][uuid]['history'].keys())[0]

|

||||

newest_history_key = list(datastore.data['watching'][uuid].history.keys())[0]

|

||||

|

||||

if newest_history_key:

|

||||

with open(datastore.data['watching'][uuid]['history'][newest_history_key],

|

||||

with open(datastore.data['watching'][uuid].history[newest_history_key],

|

||||

encoding='utf-8') as file:

|

||||

raw_content = file.read()

|

||||

|

||||

@@ -520,16 +561,9 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

# Defaults for proxy choice

|

||||

if datastore.proxy_list is not None: # When enabled

|

||||

system_proxy = datastore.data['settings']['requests']['proxy']

|

||||

if default['proxy'] is None:

|

||||

default['proxy'] = system_proxy

|

||||

else:

|

||||

# Does the chosen one exist?

|

||||

if not any(default['proxy'] in tup for tup in datastore.proxy_list):

|

||||

default['proxy'] = datastore.proxy_list[0][0]

|

||||

|

||||

# Used by the form handler to keep or remove the proxy settings

|

||||

default['proxy_list'] = datastore.proxy_list

|

||||

# Radio needs '' not None, or incase that the chosen one no longer exists

|

||||

if default['proxy'] is None or not any(default['proxy'] in tup for tup in datastore.proxy_list):

|

||||

default['proxy'] = ''

|

||||

|

||||

# proxy_override set to the json/text list of the items

|

||||

form = forms.watchForm(formdata=request.form if request.method == 'POST' else None,

|

||||
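Review note on the proxy hunk above: the new code maps a missing or no longer available proxy to `''` so the radio field has a valid value, and a later hunk maps `''` back to `None` on save. The round trip can be sketched as below, assuming `proxy_list` is a list of `(value, label)` tuples as the `[('', 'Default')] + datastore.proxy_list` line suggests. Both function names and the example proxy URL are hypothetical.

```python
from typing import List, Optional, Tuple

ProxyList = List[Tuple[str, str]]


def proxy_for_form(stored: Optional[str], proxy_list: ProxyList) -> str:
    """Value to preselect in the form: '' stands for the 'Default' choice."""
    if stored is None:
        return ""
    # Same membership test as the diff: the value may match any tuple element.
    if not any(stored in tup for tup in proxy_list):
        return ""
    return stored


def proxy_for_storage(submitted: str) -> Optional[str]:
    """Value to persist: the empty 'Default' choice is stored as None."""
    return None if submitted == "" else submitted


# Hypothetical proxy list for illustration.
proxies: ProxyList = [("http://proxy-a:3128", "Proxy A")]
print(proxy_for_form("http://gone:3128", proxies))
print(proxy_for_storage(""))
```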

@@ -540,9 +574,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

# @todo - Couldn't get setattr() etc dynamic addition working, so remove it instead

|

||||

del form.proxy

|

||||

else:

|

||||

form.proxy.choices = datastore.proxy_list

|

||||

if default['proxy'] is None:

|

||||

form.proxy.default='http://hello'

|

||||

form.proxy.choices = [('', 'Default')] + datastore.proxy_list

|

||||

|

||||

if request.method == 'POST' and form.validate():

|

||||

extra_update_obj = {}

|

||||

@@ -571,14 +603,18 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

# Reset the previous_md5 so we process a new snapshot including stripping ignore text.

|

||||

if form_ignore_text:

|

||||

if len(datastore.data['watching'][uuid]['history']):

|

||||

if len(datastore.data['watching'][uuid].history):

|

||||

extra_update_obj['previous_md5'] = get_current_checksum_include_ignore_text(uuid=uuid)

|

||||

|

||||

# Reset the previous_md5 so we process a new snapshot including stripping ignore text.

|

||||

if form.css_filter.data.strip() != datastore.data['watching'][uuid]['css_filter']:

|

||||

if len(datastore.data['watching'][uuid]['history']):

|

||||

if len(datastore.data['watching'][uuid].history):

|

||||

extra_update_obj['previous_md5'] = get_current_checksum_include_ignore_text(uuid=uuid)

|

||||

|

||||

# Be sure proxy value is None

|

||||

if datastore.proxy_list is not None and form.data['proxy'] == '':

|

||||

extra_update_obj['proxy'] = None

|

||||

|

||||

datastore.data['watching'][uuid].update(form.data)

|

||||

datastore.data['watching'][uuid].update(extra_update_obj)

|

||||

|

||||

@@ -605,6 +641,12 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

if request.method == 'POST' and not form.validate():

|

||||

flash("An error occurred, please see below.", "error")

|

||||

|

||||

visualselector_data_is_ready = datastore.visualselector_data_is_ready(uuid)

|

||||

|

||||

# Only works reliably with Playwright

|

||||

visualselector_enabled = os.getenv('PLAYWRIGHT_DRIVER_URL', False) and default['fetch_backend'] == 'html_webdriver'

|

||||

|

||||

|

||||

output = render_template("edit.html",

|

||||

uuid=uuid,

|

||||

watch=datastore.data['watching'][uuid],

|

||||
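Review note on the `visualselector_enabled` line above: `os.getenv(...) and ...` evaluates to `False`, an empty string, or a bool depending on the environment, which is fine for a template truthiness check but awkward elsewhere. A minimal sketch that always returns a bool is shown below. The function name, the injectable `env` parameter, and the example driver URL are assumptions made for illustration and testing.

```python
import os
from typing import Mapping, Optional


def visualselector_enabled(
    fetch_backend: Optional[str],
    env: Mapping[str, str] = os.environ,
) -> bool:
    """True only when a Playwright driver URL is set and the watch uses the webdriver backend."""
    has_driver = bool(env.get("PLAYWRIGHT_DRIVER_URL"))
    return has_driver and fetch_backend == "html_webdriver"


# Hypothetical environment values.
print(visualselector_enabled("html_webdriver", {"PLAYWRIGHT_DRIVER_URL": "ws://playwright:3000"}))
print(visualselector_enabled("html_webdriver", {}))
```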

@@ -612,7 +654,9 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

has_empty_checktime=using_default_check_time,

|

||||

using_global_webdriver_wait=default['webdriver_delay'] is None,

|

||||

current_base_url=datastore.data['settings']['application']['base_url'],

|

||||

emailprefix=os.getenv('NOTIFICATION_MAIL_BUTTON_PREFIX', False)

|

||||

emailprefix=os.getenv('NOTIFICATION_MAIL_BUTTON_PREFIX', False),

|

||||

visualselector_data_is_ready=visualselector_data_is_ready,

|

||||

visualselector_enabled=visualselector_enabled

|

||||

)

|

||||

|

||||

return output

|

||||

@@ -676,6 +720,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

form=form,

|

||||

current_base_url = datastore.data['settings']['application']['base_url'],

|

||||

hide_remove_pass=os.getenv("SALTED_PASS", False),

|

||||

api_key=datastore.data['settings']['application'].get('api_access_token'),

|

||||

emailprefix=os.getenv('NOTIFICATION_MAIL_BUTTON_PREFIX', False))

|

||||

|

||||

return output

|

||||

@@ -718,15 +763,14 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

return output

|

||||

|

||||

# Clear all statuses, so we do not see the 'unviewed' class

|

||||

@app.route("/api/mark-all-viewed", methods=['GET'])

|

||||

@app.route("/form/mark-all-viewed", methods=['GET'])

|

||||

@login_required

|

||||

def mark_all_viewed():

|

||||

|

||||

# Save the current newest history as the most recently viewed

|

||||

for watch_uuid, watch in datastore.data['watching'].items():

|

||||

datastore.set_last_viewed(watch_uuid, watch['newest_history_key'])

|

||||

datastore.set_last_viewed(watch_uuid, int(time.time()))

|

||||

|

||||

flash("Cleared all statuses.")

|

||||

return redirect(url_for('index'))

|

||||

|

||||

@app.route("/diff/<string:uuid>", methods=['GET'])

|

||||

@@ -744,20 +788,17 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

flash("No history found for the specified link, bad link?", "error")

|

||||

return redirect(url_for('index'))

|

||||

|

||||

dates = list(watch['history'].keys())

|

||||

# Convert to int, sort and back to str again

|

||||

# @todo replace datastore getter that does this automatically

|

||||

dates = [int(i) for i in dates]

|

||||

dates.sort(reverse=True)

|

||||

dates = [str(i) for i in dates]

|

||||

history = watch.history

|

||||

dates = list(history.keys())

|

||||

|

||||

if len(dates) < 2:

|

||||

flash("Not enough saved change detection snapshots to produce a report.", "error")

|

||||

return redirect(url_for('index'))

|

||||

|

||||

# Save the current newest history as the most recently viewed

|

||||

datastore.set_last_viewed(uuid, dates[0])

|

||||

newest_file = watch['history'][dates[0]]

|

||||

datastore.set_last_viewed(uuid, time.time())

|

||||

|

||||

newest_file = history[dates[-1]]

|

||||

|

||||

try:

|

||||

with open(newest_file, 'r') as f:

|

||||

@@ -767,10 +808,10 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

previous_version = request.args.get('previous_version')

|

||||

try:

|

||||

previous_file = watch['history'][previous_version]

|

||||

previous_file = history[previous_version]

|

||||

except KeyError:

|

||||

# Not present, use a default value, the second one in the sorted list.

|

||||

previous_file = watch['history'][dates[1]]

|

||||

previous_file = history[dates[-2]]

|

||||

|

||||

try:

|

||||

with open(previous_file, 'r') as f:

|

||||

@@ -781,18 +822,25 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

screenshot_url = datastore.get_screenshot(uuid)

|

||||

|

||||

output = render_template("diff.html", watch_a=watch,

|

||||

system_uses_webdriver = datastore.data['settings']['application']['fetch_backend'] == 'html_webdriver'

|

||||

|

||||

is_html_webdriver = True if watch.get('fetch_backend') == 'html_webdriver' or (

|

||||

watch.get('fetch_backend', None) is None and system_uses_webdriver) else False

|

||||

|

||||

output = render_template("diff.html",

|

||||

watch_a=watch,

|

||||

newest=newest_version_file_contents,

|

||||

previous=previous_version_file_contents,

|

||||

extra_stylesheets=extra_stylesheets,

|

||||

versions=dates[1:],

|

||||

uuid=uuid,

|

||||

newest_version_timestamp=dates[0],

|

||||

newest_version_timestamp=dates[-1],

|

||||

current_previous_version=str(previous_version),

|

||||

current_diff_url=watch['url'],

|

||||

extra_title=" - Diff - {}".format(watch['title'] if watch['title'] else watch['url']),

|

||||

left_sticky=True,

|

||||

screenshot=screenshot_url)

|

||||

screenshot=screenshot_url,

|

||||

is_html_webdriver=is_html_webdriver)

|

||||

|

||||

return output

|

||||

|

||||
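Review note on `is_html_webdriver` above: the same `True if ... else False` expression is added to both the diff view and the preview view. One way to avoid the duplication is a small helper, sketched here under the assumption that a watch with no `fetch_backend` set inherits the system setting. The helper name is hypothetical, and `"other_backend"` below is a placeholder value rather than a real backend name.

```python
from typing import Optional


def uses_html_webdriver(watch_backend: Optional[str], system_backend: str) -> bool:
    """Resolve whether a watch is effectively fetched via the webdriver backend."""
    if watch_backend == "html_webdriver":
        return True
    # A watch without its own setting falls back to the system-wide backend.
    return watch_backend is None and system_backend == "html_webdriver"


print(uses_html_webdriver(None, "html_webdriver"))
print(uses_html_webdriver("other_backend", "html_webdriver"))
```

Both views could then pass `uses_html_webdriver(watch.get('fetch_backend'), system_backend)` to `render_template`.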

@@ -807,6 +855,12 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

if uuid == 'first':

|

||||

uuid = list(datastore.data['watching'].keys()).pop()

|

||||

|

||||

# Normally you would never reach this, because the 'preview' button is not available when there's no history

|

||||

# However they may try to scrub and reload the page

|

||||

if datastore.data['watching'][uuid].history_n == 0:

|

||||

flash("Preview unavailable - No fetch/check completed or triggers not reached", "error")

|

||||

return redirect(url_for('index'))

|

||||

|

||||

extra_stylesheets = [url_for('static_content', group='styles', filename='diff.css')]

|

||||

|

||||

try:

|

||||

@@ -815,9 +869,9 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

flash("No history found for the specified link, bad link?", "error")

|

||||

return redirect(url_for('index'))

|

||||

|

||||

if len(watch['history']):

|

||||

timestamps = sorted(watch['history'].keys(), key=lambda x: int(x))

|

||||

filename = watch['history'][timestamps[-1]]

|

||||

if watch.history_n >0:

|

||||

timestamps = sorted(watch.history.keys(), key=lambda x: int(x))

|

||||

filename = watch.history[timestamps[-1]]

|

||||

try:

|

||||

with open(filename, 'r') as f:

|

||||

tmp = f.readlines()

|

||||

@@ -853,6 +907,11 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

content.append({'line': "No history found", 'classes': ''})

|

||||

|

||||

screenshot_url = datastore.get_screenshot(uuid)

|

||||

system_uses_webdriver = datastore.data['settings']['application']['fetch_backend'] == 'html_webdriver'

|

||||

|

||||

is_html_webdriver = True if watch.get('fetch_backend') == 'html_webdriver' or (

|

||||

watch.get('fetch_backend', None) is None and system_uses_webdriver) else False

|

||||

|

||||

output = render_template("preview.html",

|

||||

content=content,

|

||||

extra_stylesheets=extra_stylesheets,

|

||||

@@ -861,8 +920,9 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

current_diff_url=watch['url'],

|

||||

screenshot=screenshot_url,

|

||||

watch=watch,

|

||||

uuid=uuid)

|

||||

|

||||

uuid=uuid,

|

||||

is_html_webdriver=is_html_webdriver)

|

||||

|

||||

return output

|

||||

|

||||

@app.route("/settings/notification-logs", methods=['GET'])

|

||||

@@ -870,31 +930,10 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

def notification_logs():

|

||||

global notification_debug_log

|

||||

output = render_template("notification-log.html",

|

||||

logs=notification_debug_log if len(notification_debug_log) else ["No errors or warnings detected"])

|

||||

logs=notification_debug_log if len(notification_debug_log) else ["Notification logs are empty - no notifications sent yet."])

|

||||

|

||||

return output

|

||||

|

||||

@app.route("/api/<string:uuid>/snapshot/current", methods=['GET'])

|

||||

@login_required

|

||||

def api_snapshot(uuid):

|

||||

|

||||

# More for testing, possible to return the first/only

|

||||

if uuid == 'first':

|

||||

uuid = list(datastore.data['watching'].keys()).pop()

|

||||

|

||||

try:

|

||||

watch = datastore.data['watching'][uuid]

|

||||

except KeyError:

|

||||

return abort(400, "No history found for the specified link, bad link?")

|

||||

|

||||

newest = list(watch['history'].keys())[-1]

|

||||

with open(watch['history'][newest], 'r') as f:

|

||||

content = f.read()

|

||||

|

||||

resp = make_response(content)

|

||||

resp.headers['Content-Type'] = 'text/plain'

|

||||

return resp

|

||||

|

||||

@app.route("/favicon.ico", methods=['GET'])

|

||||

def favicon():

|

||||

return send_from_directory("static/images", path="favicon.ico")

|

||||

@@ -975,10 +1014,9 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

@app.route("/static/<string:group>/<string:filename>", methods=['GET'])

|

||||

def static_content(group, filename):

|

||||

from flask import make_response

|

||||

|

||||

if group == 'screenshot':

|

||||

|

||||

from flask import make_response

|

||||

|

||||

# Could be sensitive, follow password requirements

|

||||

if datastore.data['settings']['application']['password'] and not flask_login.current_user.is_authenticated:

|

||||

abort(403)

|

||||

@@ -997,6 +1035,26 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

except FileNotFoundError:

|

||||

abort(404)

|

||||

|

||||

|

||||

if group == 'visual_selector_data':

|

||||

# Could be sensitive, follow password requirements

|

||||

if datastore.data['settings']['application']['password'] and not flask_login.current_user.is_authenticated:

|

||||

abort(403)

|

||||

|

||||

# These files should be in our subdirectory

|

||||

try:

|

||||

# set nocache, set content-type

|

||||

watch_dir = datastore_o.datastore_path + "/" + filename

|

||||

response = make_response(send_from_directory(filename="elements.json", directory=watch_dir, path=watch_dir + "/elements.json"))

|

||||

response.headers['Content-type'] = 'application/json'

|

||||

response.headers['Cache-Control'] = 'no-cache, no-store, must-revalidate'

|

||||

response.headers['Pragma'] = 'no-cache'

|

||||

response.headers['Expires'] = 0

|

||||

return response

|

||||

|

||||

except FileNotFoundError:

|

||||

abort(404)

|

||||

|

||||

# These files should be in our subdirectory

|

||||

try:

|

||||

return send_from_directory("static/{}".format(group), path=filename)

|

||||

@@ -1005,7 +1063,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

@app.route("/api/add", methods=['POST'])

|

||||

@login_required

|

||||

def api_watch_add():

|

||||

def form_watch_add():

|

||||

from changedetectionio import forms

|

||||

form = forms.quickWatchForm(request.form)

|

||||

|

||||

@@ -1031,7 +1089,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

@app.route("/api/delete", methods=['GET'])

|

||||

@login_required

|

||||

def api_delete():

|

||||

def form_delete():

|

||||

uuid = request.args.get('uuid')

|

||||

|

||||

if uuid != 'all' and not uuid in datastore.data['watching'].keys():

|

||||

@@ -1048,7 +1106,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

@app.route("/api/clone", methods=['GET'])

|

||||

@login_required

|

||||

def api_clone():

|

||||

def form_clone():

|

||||

uuid = request.args.get('uuid')

|

||||

# More for testing, possible to return the first/only

|

||||

if uuid == 'first':

|

||||

@@ -1062,7 +1120,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

@app.route("/api/checknow", methods=['GET'])

|

||||

@login_required

|

||||

def api_watch_checknow():

|

||||

def form_watch_checknow():

|

||||

|

||||

tag = request.args.get('tag')

|

||||

uuid = request.args.get('uuid')

|

||||

@@ -1099,7 +1157,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

@app.route("/api/share-url", methods=['GET'])

|

||||

@login_required

|

||||

def api_share_put_watch():

|

||||

def form_share_put_watch():

|

||||

"""Given a watch UUID, upload the info and return a share-link

|

||||

the share-link can be imported/added"""

|

||||

import requests

|

||||

@@ -1113,6 +1171,7 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

# copy it to memory as trim off what we dont need (history)

|

||||

watch = deepcopy(datastore.data['watching'][uuid])

|

||||

# For older versions that are not a @property

|

||||

if (watch.get('history')):

|

||||

del (watch['history'])

|

||||

|

||||

@@ -1142,14 +1201,14 @@ def changedetection_app(config=None, datastore_o=None):

|

||||

|

||||

|

||||

except Exception as e:

|

||||

flash("Could not share, something went wrong while communicating with the share server.", 'error')

|

||||

logging.error("Error sharing -{}".format(str(e)))

|

||||

flash("Could not share, something went wrong while communicating with the share server - {}".format(str(e)), 'error')

|

||||

|

||||

# https://changedetection.io/share/VrMv05wpXyQa

|

||||

# in the browser - should give you a nice info page - wtf

|

||||

# paste in etc

|

||||

return redirect(url_for('index'))

|

||||

|

||||

|

||||

# @todo handle ctrl break

|

||||

ticker_thread = threading.Thread(target=ticker_thread_check_time_launch_checks).start()

|

||||

|

||||

@@ -1191,6 +1250,9 @@ def check_for_new_version():

|

||||

|

||||

def notification_runner():

|

||||

global notification_debug_log

|

||||

from datetime import datetime

|

||||

import json

|

||||

|

||||

while not app.config.exit.is_set():

|

||||

try:

|

||||

# At the moment only one thread runs (single runner)

|

||||

@@ -1199,13 +1261,16 @@ def notification_runner():

|

||||

time.sleep(1)

|

||||

|

||||

else:

|

||||

# Process notifications

|

||||

|

||||

now = datetime.now()

|

||||

|

||||

try:

|

||||

from changedetectionio import notification

|

||||

notification.process_notification(n_object, datastore)

|

||||

|

||||

sent_obj = notification.process_notification(n_object, datastore)

|

||||

|

||||

except Exception as e:

|

||||

print("Watch URL: {} Error {}".format(n_object['watch_url'], str(e)))

|

||||

logging.error("Watch URL: {} Error {}".format(n_object['watch_url'], str(e)))

|

||||

|

||||

# UUID won't be present when we submit a 'test' from the global settings

|

||||

if 'uuid' in n_object:

|

||||

@@ -1215,14 +1280,19 @@ def notification_runner():

|

||||

log_lines = str(e).splitlines()

|

||||

notification_debug_log += log_lines

|

||||

|

||||

# Trim the log length

|

||||

notification_debug_log = notification_debug_log[-100:]

|

||||

|

||||

# Process notifications

|

||||

notification_debug_log+= ["{} - SENDING - {}".format(now.strftime("%Y/%m/%d %H:%M:%S,000"), json.dumps(sent_obj))]

|

||||

# Trim the log length

|

||||

notification_debug_log = notification_debug_log[-100:]

|

||||

|

||||
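The runner above appends to `notification_debug_log` and then slices it back to the last 100 entries. A minimal sketch of the same bounded-log idea using `collections.deque`, offered as an alternative and not as what the diff does; `MAX_LOG_LINES` and `log_sent` are hypothetical names introduced for illustration:

```python
from collections import deque
from datetime import datetime
import json

MAX_LOG_LINES = 100  # assumption: mirrors the [-100:] slice used in the diff

# A deque with maxlen drops the oldest entry automatically on append
notification_debug_log = deque(maxlen=MAX_LOG_LINES)


def log_sent(sent_obj, now=None):
    """Append one 'SENDING' line in the same format the runner uses."""
    now = now or datetime.now()
    line = "{} - SENDING - {}".format(now.strftime("%Y/%m/%d %H:%M:%S,000"),
                                      json.dumps(sent_obj))
    notification_debug_log.append(line)
    return line
```

With a bounded deque no separate trim step is needed, though any code that expects a plain list (for example `+=` with another list followed by slicing) would have to be adapted.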

# Thread runner to check every minute, look for new watches to feed into the Queue.

|

||||

def ticker_thread_check_time_launch_checks():

|

||||

import random

|

||||

from changedetectionio import update_worker

|

||||

|

||||

recheck_time_minimum_seconds = int(os.getenv('MINIMUM_SECONDS_RECHECK_TIME', 20))

|

||||

print("System env MINIMUM_SECONDS_RECHECK_TIME", recheck_time_minimum_seconds)

|

||||

|

||||

# Spin up Workers that do the fetching

|

||||

# Can be overridden by ENV or use the default settings

|

||||

n_workers = int(os.getenv("FETCH_WORKERS", datastore.data['settings']['requests']['workers']))

|

||||

@@ -1240,9 +1310,10 @@ def ticker_thread_check_time_launch_checks():

|

||||

running_uuids.append(t.current_uuid)

|

||||

|

||||

# Re #232 - Deepcopy the data in case it changes while we're iterating through it all

|

||||

watch_uuid_list = []

|

||||

while True:

|

||||

try:

|

||||

copied_datastore = deepcopy(datastore)

|

||||

watch_uuid_list = datastore.data['watching'].keys()

|

||||

except RuntimeError as e:

|

||||

# RuntimeError: dictionary changed size during iteration

|

||||

time.sleep(0.1)

|

||||

@@ -1253,33 +1324,49 @@ def ticker_thread_check_time_launch_checks():

|

||||

while update_q.qsize() >= 2000:

|

||||

time.sleep(1)

|

||||

|

||||

|

||||

recheck_time_system_seconds = int(datastore.threshold_seconds)

|

||||

|

||||

# Check for watches outside of the time threshold to put in the thread queue.

|

||||

now = time.time()

|

||||

|

||||

recheck_time_minimum_seconds = int(os.getenv('MINIMUM_SECONDS_RECHECK_TIME', 60))

|

||||

recheck_time_system_seconds = datastore.threshold_seconds

|

||||

|

||||

for uuid, watch in copied_datastore.data['watching'].items():

|

||||

for uuid in watch_uuid_list:

|

||||

now = time.time()

|

||||

watch = datastore.data['watching'].get(uuid)

|

||||

if not watch:

|

||||

logging.error("Watch: {} no longer present.".format(uuid))

|

||||

continue

|

||||

|

||||

# No need to do further processing if it's paused

|

||||

if watch['paused']:

|

||||

continue

|

||||

|

||||

# If they supplied an individual entry minutes to threshold.

|

||||

threshold = now

|

||||

watch_threshold_seconds = watch.threshold_seconds()

|

||||

if watch_threshold_seconds:

|

||||

threshold -= watch_threshold_seconds

|

||||

else:

|

||||

threshold -= recheck_time_system_seconds

|

||||

|

||||

# Yeah, put it in the queue, it's more than time

|

||||

if watch['last_checked'] <= max(threshold, recheck_time_minimum_seconds):

|

||||

watch_threshold_seconds = watch.threshold_seconds()

|

||||

threshold = watch_threshold_seconds if watch_threshold_seconds > 0 else recheck_time_system_seconds

|

||||

|

||||

# #580 - Jitter plus/minus amount of time to make the check seem more random to the server

|

||||

jitter = datastore.data['settings']['requests'].get('jitter_seconds', 0)

|

||||

if jitter > 0:

|

||||

if watch.jitter_seconds == 0:

|

||||

watch.jitter_seconds = random.uniform(-abs(jitter), jitter)

|

||||

|

||||

|

||||

seconds_since_last_recheck = now - watch['last_checked']

|

||||

if seconds_since_last_recheck >= (threshold + watch.jitter_seconds) and seconds_since_last_recheck >= recheck_time_minimum_seconds:

|

||||

if uuid not in running_uuids and uuid not in update_q.queue:

|

||||

print("Queued watch UUID {} last checked at {} queued at {:0.2f} jitter {:0.2f}s, {:0.2f}s since last checked".format(uuid,

|

||||

watch['last_checked'],

|

||||

now,

|

||||

watch.jitter_seconds,

|

||||

now - watch['last_checked']))

|

||||

# Into the queue with you

|

||||

update_q.put(uuid)

|

||||

|

||||

# Wait a few seconds before checking the list again

|

||||

time.sleep(3)

|

||||

# Reset for next time

|

||||

watch.jitter_seconds = 0

|

||||

|

||||

# Wait before checking the list again - saves CPU

|

||||

time.sleep(1)

|

||||

|

||||

# Should be low so we can break this out in testing

|

||||

app.config.exit.wait(1)

|

||||
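The reworked ticker loop queues a watch only when the time since its last check exceeds both the (jittered) threshold and the global minimum. A minimal sketch of that decision as two pure functions, under the assumption that times are plain seconds; `pick_jitter` and `is_due` are hypothetical helper names, not functions from the codebase:

```python
import random


def pick_jitter(jitter_setting, current_jitter=0.0, rng=random):
    """Return a per-watch jitter; keep the existing value until it is reset to 0."""
    if jitter_setting > 0 and current_jitter == 0:
        # Same call as the diff: a value in [-jitter, +jitter]
        return rng.uniform(-abs(jitter_setting), jitter_setting)
    return current_jitter


def is_due(now, last_checked, watch_threshold, system_threshold,
           minimum_seconds, jitter=0.0):
    """True when a watch should be queued, following the two conditions in the diff."""
    # A per-watch threshold wins; otherwise fall back to the system-wide one
    threshold = watch_threshold if watch_threshold > 0 else system_threshold
    elapsed = now - last_checked
    return elapsed >= (threshold + jitter) and elapsed >= minimum_seconds
```

For example, `is_due(1000, 995, 1, 300, 20)` is false: the one-second per-watch threshold has passed, but the 20-second minimum has not.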

0

changedetectionio/api/__init__.py

Normal file

124

changedetectionio/api/api_v1.py

Normal file

@@ -0,0 +1,124 @@

|

||||

from flask_restful import abort, Resource

|

||||

from flask import request, make_response

|

||||

import validators

|

||||

from . import auth

|

||||

|

||||

|

||||

|

||||

# https://www.w3.org/Protocols/rfc2616/rfc2616-sec10.html

|

||||

|

||||

class Watch(Resource):

|

||||

def __init__(self, **kwargs):

|

||||

# datastore is a black box dependency

|

||||

self.datastore = kwargs['datastore']

|

||||

self.update_q = kwargs['update_q']

|

||||

|

||||

# Get information about a single watch, excluding the history list (can be large)

|

||||

# curl http://localhost:4000/api/v1/watch/<string:uuid>

|

||||

# ?recheck=true

|

||||

@auth.check_token

|

||||

def get(self, uuid):

|

||||

from copy import deepcopy

|

||||

watch = deepcopy(self.datastore.data['watching'].get(uuid))

|

||||

if not watch:

|

||||

abort(404, message='No watch exists with the UUID of {}'.format(uuid))

|

||||

|

||||

if request.args.get('recheck'):

|

||||

self.update_q.put(uuid)

|

||||

return "OK", 200

|

||||

|

||||

# Return without history, get that via another API call

|

||||

watch['history_n'] = watch.history_n

|

||||

return watch

|

||||

|

||||

@auth.check_token

|

||||

def delete(self, uuid):

|

||||

if not self.datastore.data['watching'].get(uuid):

|

||||

abort(400, message='No watch exists with the UUID of {}'.format(uuid))

|

||||

|

||||

self.datastore.delete(uuid)

|

||||

return 'OK', 204

|

||||

|

||||

|

||||

class WatchHistory(Resource):

|

||||

def __init__(self, **kwargs):

|

||||

# datastore is a black box dependency

|

||||

self.datastore = kwargs['datastore']

|

||||

|

||||

# Get a list of available history for a watch by UUID

|

||||

# curl http://localhost:4000/api/v1/watch/<string:uuid>/history

|

||||

def get(self, uuid):

|

||||

watch = self.datastore.data['watching'].get(uuid)

|

||||

if not watch:

|

||||

abort(404, message='No watch exists with the UUID of {}'.format(uuid))

|

||||

return watch.history, 200

|

||||

|

||||

|

||||

class WatchSingleHistory(Resource):

|

||||

def __init__(self, **kwargs):

|

||||

# datastore is a black box dependency

|

||||

self.datastore = kwargs['datastore']

|

||||

|

||||

# Read a given history snapshot and return its content

|

||||

# <string:timestamp> or "latest"

|

||||

# curl http://localhost:4000/api/v1/watch/<string:uuid>/history/<int:timestamp>

|

||||

@auth.check_token

|

||||

def get(self, uuid, timestamp):

|

||||

watch = self.datastore.data['watching'].get(uuid)

|

||||

if not watch:

|

||||

abort(404, message='No watch exists with the UUID of {}'.format(uuid))

|

||||

|

||||

if not len(watch.history):

|

||||

abort(404, message='Watch found but no history exists for the UUID {}'.format(uuid))

|

||||

|

||||

if timestamp == 'latest':

|

||||

timestamp = list(watch.history.keys())[-1]

|

||||

|

||||

with open(watch.history[timestamp], 'r') as f:

|

||||

content = f.read()

|

||||

|

||||

response = make_response(content, 200)

|

||||

response.mimetype = "text/plain"

|

||||

return response

|

||||

|

||||

|

||||

class CreateWatch(Resource):

|

||||

def __init__(self, **kwargs):

|

||||

# datastore is a black box dependency

|

||||

self.datastore = kwargs['datastore']

|

||||

self.update_q = kwargs['update_q']

|

||||

|

||||

@auth.check_token

|

||||

def post(self):

|

||||

# curl http://localhost:4000/api/v1/watch -H "Content-Type: application/json" -d '{"url": "https://my-nice.com", "tag": "one, two" }'

|

||||

json_data = request.get_json()

|

||||

tag = json_data['tag'].strip() if json_data.get('tag') else ''

|

||||

|

||||

if not validators.url(json_data['url'].strip()):

|

||||

return "Invalid or unsupported URL", 400

|

||||

|

||||

extras = {'title': json_data['title'].strip()} if json_data.get('title') else {}

|

||||

|

||||

new_uuid = self.datastore.add_watch(url=json_data['url'].strip(), tag=tag, extras=extras)

|

||||

self.update_q.put(new_uuid)

|

||||

return {'uuid': new_uuid}, 201

|

||||

|

||||

# Return concise list of available watches and some very basic info

|

||||

# curl http://localhost:4000/api/v1/watch|python -mjson.tool

|

||||

# ?recheck_all=1 to recheck all

|

||||

@auth.check_token

|

||||

def get(self):

|

||||

list = {}

|

||||

for k, v in self.datastore.data['watching'].items():

|

||||

list[k] = {'url': v['url'],

|

||||

'title': v['title'],

|

||||

'last_checked': v['last_checked'],

|

||||

'last_changed': v['last_changed'],

|

||||

'last_error': v['last_error']}

|

||||

|

||||

if request.args.get('recheck_all'):

|

||||

for uuid in self.datastore.data['watching'].keys():

|

||||

self.update_q.put(uuid)

|

||||

return {'status': "OK"}, 200

|

||||

|

||||

return list, 200

|

||||
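The `curl` comments in `api_v1.py` suggest how a client would create a watch. A minimal sketch of building the same request with the standard library; the host and port are taken from those comments, and `build_create_watch_request` is a hypothetical helper introduced here:

```python
import json
import urllib.request

BASE_URL = "http://localhost:4000"  # assumption: host/port as in the curl comments


def build_create_watch_request(url, api_key, tag=None, title=None, base_url=BASE_URL):
    """Build (but do not send) a POST to /api/v1/watch with the x-api-key header."""
    payload = {"url": url}
    if tag:
        payload["tag"] = tag
    if title:
        payload["title"] = title
    return urllib.request.Request(
        base_url + "/api/v1/watch",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-api-key": api_key},
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` would send it; per the `CreateWatch.post` handler above, a successful call should answer with status 201 and a JSON body containing the new `uuid`.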

33

changedetectionio/api/auth.py

Normal file

@@ -0,0 +1,33 @@

|

||||

from flask import request, make_response, jsonify

|

||||

from functools import wraps

|

||||

|

||||

|

||||

# Simple API auth key comparison

|

||||

# @todo - Maybe short lived token in the future?

|

||||

|

||||

def check_token(f):

|

||||

@wraps(f)

|

||||

def decorated(*args, **kwargs):

|

||||

datastore = args[0].datastore

|

||||

|

||||

config_api_token_enabled = datastore.data['settings']['application'].get('api_access_token_enabled')

|

||||

if not config_api_token_enabled:

|

||||

return

|

||||

|

||||

try:

|

||||

api_key_header = request.headers['x-api-key']

|

||||

except KeyError:

|

||||

return make_response(

|

||||

jsonify("No authorization x-api-key header."), 403

|

||||

)

|

||||

|

||||

config_api_token = datastore.data['settings']['application'].get('api_access_token')

|

||||

|

||||

if api_key_header != config_api_token:

|

||||

return make_response(

|

||||

jsonify("Invalid access - API key invalid."), 403

|

||||

)

|

||||

|

||||

return f(*args, **kwargs)

|

||||

|

||||

return decorated

|

||||
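The decorator in `auth.py` compares the `x-api-key` header with the configured token. A framework-free sketch of the same check follows; `make_check_token`, `get_settings`, and `get_header` are hypothetical names standing in for the datastore and the Flask request. Two choices here are assumptions rather than what the diff does: the wrapped function is still called when token checking is disabled, and the comparison uses `hmac.compare_digest`.

```python
import hmac
from functools import wraps


def make_check_token(get_settings, get_header):
    """Build a token-checking decorator from two injected callables."""
    def check_token(f):
        @wraps(f)
        def decorated(*args, **kwargs):
            settings = get_settings()
            if not settings.get('api_access_token_enabled'):
                # Assumption: with checking disabled, pass the call straight through
                return f(*args, **kwargs)

            supplied = get_header('x-api-key')
            if supplied is None:
                return "No authorization x-api-key header.", 403

            expected = settings.get('api_access_token') or ''
            # compare_digest avoids leaking the mismatch position through timing
            if not hmac.compare_digest(supplied.encode(), expected.encode()):
                return "Invalid access - API key invalid.", 403

            return f(*args, **kwargs)
        return decorated
    return check_token
```

Injecting the settings and header lookups keeps the check testable without a running Flask application.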

@@ -1,11 +1,18 @@

|

||||

from abc import ABC, abstractmethod

|

||||

import chardet

|

||||

import json

|

||||

import os

|

||||

import requests

|

||||

import time

|

||||

import urllib3.exceptions

|

||||

import sys

|

||||

|

||||

class PageUnloadable(Exception):

|

||||

def __init__(self, status_code, url):

|

||||

# Set this so we can use it in other parts of the app

|

||||

self.status_code = status_code

|

||||

self.url = url

|

||||

return

|

||||

pass

|

||||

|

||||

class EmptyReply(Exception):

|

||||

def __init__(self, status_code, url):

|

||||

@@ -13,7 +20,22 @@ class EmptyReply(Exception):

|

||||

self.status_code = status_code

|

||||

self.url = url

|

||||

return

|

||||

pass

|

||||

|

||||

class ScreenshotUnavailable(Exception):

|

||||

def __init__(self, status_code, url):

|

||||

# Set this so we can use it in other parts of the app

|

||||

self.status_code = status_code

|

||||

self.url = url

|

||||

return

|

||||

pass

|

||||

|

||||

class ReplyWithContentButNoText(Exception):

|

||||

def __init__(self, status_code, url):

|

||||

# Set this so we can use it in other parts of the app

|

||||

self.status_code = status_code

|

||||

self.url = url

|

||||

return

|

||||

pass

|

||||

|

||||

|

||||
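The four exception classes above repeat the same `__init__`. One possible consolidation is sketched below, assuming a shared base class is acceptable; `FetchError` is a hypothetical name and is not part of the diff:

```python
class FetchError(Exception):
    """Hypothetical shared base: stores the fields every fetch exception carries."""

    def __init__(self, status_code, url):
        # Give the exception a readable message as well as the attributes
        super().__init__("{} (status={}, url={})".format(
            type(self).__name__, status_code, url))
        # Set these so other parts of the app can inspect them
        self.status_code = status_code
        self.url = url


class PageUnloadable(FetchError):
    pass


class EmptyReply(FetchError):
    pass


class ScreenshotUnavailable(FetchError):
    pass


class ReplyWithContentButNoText(FetchError):
    pass
```

Callers could then catch `FetchError` to handle all four cases at once, or a specific subclass where the distinction matters.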

@@ -22,6 +44,135 @@ class Fetcher():

|

||||

status_code = None

|

||||

content = None

|

||||

headers = None

|

||||

|

||||

fetcher_description = "No description"

|

||||

xpath_element_js = """

|

||||

// Include the getXpath script directly, easier than fetching

|

||||

!function(e,n){"object"==typeof exports&&"undefined"!=typeof module?module.exports=n():"function"==typeof define&&define.amd?define(n):(e=e||self).getXPath=n()}(this,function(){return function(e){var n=e;if(n&&n.id)return'//*[@id="'+n.id+'"]';for(var o=[];n&&Node.ELEMENT_NODE===n.nodeType;){for(var i=0,r=!1,d=n.previousSibling;d;)d.nodeType!==Node.DOCUMENT_TYPE_NODE&&d.nodeName===n.nodeName&&i++,d=d.previousSibling;for(d=n.nextSibling;d;){if(d.nodeName===n.nodeName){r=!0;break}d=d.nextSibling}o.push((n.prefix?n.prefix+":":"")+n.localName+(i||r?"["+(i+1)+"]":"")),n=n.parentNode}return o.length?"/"+o.reverse().join("/"):""}});

|

||||

|

||||

|

||||

const findUpTag = (el) => {

|

||||

let r = el

|

||||

chained_css = [];

|

||||

depth=0;

|

||||

|

||||

// Strategy 1: Keep going up until we hit an ID tag, imagine it's like #list-widget div h4

|

||||

while (r.parentNode) {

|

||||

if(depth==5) {

|

||||

break;

|

||||

}

|

||||

if('' !==r.id) {

|

||||

chained_css.unshift("#"+r.id);

|

||||

final_selector= chained_css.join('>');

|

||||

// Be sure there's only one; some sites have multiple elements with the same ID :-(

|

||||

if (window.document.querySelectorAll(final_selector).length ==1 ) {

|

||||

return final_selector;

|

||||

}

|

||||

return null;

|

||||

} else {

|

||||

chained_css.unshift(r.tagName.toLowerCase());

|

||||

}

|

||||

r=r.parentNode;

|

||||

depth+=1;

|

||||

}

|

||||

return null;

|

||||

}

|

||||

|

||||

|

||||

// @todo - if it's SVG or IMG, go into image diff mode

|

||||

var elements = window.document.querySelectorAll("div,span,form,table,tbody,tr,td,a,p,ul,li,h1,h2,h3,h4, header, footer, section, article, aside, details, main, nav, section, summary");

|

||||

var size_pos=[];

|

||||

// after page fetch, inject this JS

|

||||

// build a map of all elements and their positions (maybe only those that include text?)

|

||||

var bbox;

|

||||

for (var i = 0; i < elements.length; i++) {

|

||||

bbox = elements[i].getBoundingClientRect();

|

||||

|

||||

// forget really small ones

|

||||

if (bbox['width'] <20 && bbox['height'] < 20 ) {

|

||||

continue;

|

||||

}

|

||||

|

||||

// @todo getXPath is weak here; it doesn't always notice when, for example, a single ID would be enough

|

||||

// it should not traverse when we know we can anchor off just an ID one level up etc..

|

||||

// maybe, get current class or id, keep traversing up looking for only class or id until there is just one match

|

||||

|

||||

// 1st primitive - if it has a class, try joining it all and select; if there's only one match, that's our element.

|

||||

xpath_result=false;

|

||||

|

||||

try {

|

||||

var d= findUpTag(elements[i]);

|

||||

if (d) {

|

||||

xpath_result =d;

|

||||

}

|

||||

} catch (e) {

|

||||

console.log(e);

|

||||

}

|

||||

|

||||

// You could swap it and default to getXpath and then try the smarter one

|

||||

// default back to the less intelligent one

|

||||

if (!xpath_result) {

|

||||

try {

|

||||

// I've seen on FB and eBay that this doesn't work

|

||||

// ReferenceError: getXPath is not defined at eval (eval at evaluate (:152:29), <anonymous>:67:20) at UtilityScript.evaluate (<anonymous>:159:18) at UtilityScript.<anonymous> (<anonymous>:1:44)

|

||||

xpath_result = getXPath(elements[i]);

|

||||

} catch (e) {

|

||||

console.log(e);

|

||||

continue;

|

||||

}

|

||||

}

|

||||

|

||||

if(window.getComputedStyle(elements[i]).visibility === "hidden") {

|

||||

continue;

|

||||

}

|

||||

|

||||

size_pos.push({

|

||||

xpath: xpath_result,

|

||||

width: Math.round(bbox['width']),

|

||||

height: Math.round(bbox['height']),

|

||||

left: Math.floor(bbox['left']),

|

||||

top: Math.floor(bbox['top']),

|

||||

childCount: elements[i].childElementCount

|

||||

});

|

||||

}

|

||||

|

||||

|

||||

// inject the current one set in the css_filter, which may be a CSS rule

|

||||

// used for displaying the current one in VisualSelector, where it's not one we generated.

|

||||

if (css_filter.length) {

|

||||

q=false;

|

||||

try {

|

||||

// is it xpath?

|

||||

if (css_filter.startsWith('/') || css_filter.startsWith('xpath:')) {

|

||||

q=document.evaluate(css_filter.replace('xpath:',''), document, null, XPathResult.FIRST_ORDERED_NODE_TYPE, null).singleNodeValue;

|

||||

} else {

|

||||

q=document.querySelector(css_filter);

|

||||

}

|

||||

} catch (e) {

|

||||

// Maybe catch DOMException and alert?

|

||||

console.log(e);

|

||||

}

|

||||

bbox=false;

|

||||

if(q) {

|

||||

bbox = q.getBoundingClientRect();

|

||||

}

|

||||

|

||||

if (bbox && bbox['width'] >0 && bbox['height']>0) {

|

||||

size_pos.push({

|

||||

xpath: css_filter,

|

||||

width: bbox['width'],

|

||||

height: bbox['height'],

|

||||

left: bbox['left'],

|

||||

top: bbox['top'],

|

||||

childCount: q.childElementCount

|

||||

});

|

||||

}

|

||||

}

|

||||

// Window.width required for proper scaling in the frontend

|

||||

return {'size_pos':size_pos, 'browser_width': window.innerWidth};

|

||||

"""

|

||||

xpath_data = None

|

||||

|

||||

# Will be needed in the future by the VisualSelector, always get this where possible.

|

||||

screenshot = False

|

||||

fetcher_description = "No description"

|

||||

@@ -42,7 +193,8 @@ class Fetcher():

|

||||

request_headers,

|

||||

request_body,

|

||||

request_method,

|

||||

ignore_status_codes=False):

|

||||

ignore_status_codes=False,

|

||||

current_css_filter=None):

|

||||

# Should set self.error, self.status_code and self.content

|

||||

pass

|

||||

|

||||

@@ -123,52 +275,95 @@ class base_html_playwright(Fetcher):

|

||||

request_headers,

|

||||

request_body,

|

||||

request_method,

|

||||

ignore_status_codes=False):

|

||||

ignore_status_codes=False,

|

||||

current_css_filter=None):

|

||||

|

||||

from playwright.sync_api import sync_playwright

|

||||

import playwright._impl._api_types

|

||||

from playwright._impl._api_types import Error, TimeoutError

|

||||

|

||||

response = None

|

||||

with sync_playwright() as p:

|

||||

browser_type = getattr(p, self.browser_type)

|

||||

|

||||

# Seemed to cause a connection Exception even though I can see it connect

|

||||

# self.browser = browser_type.connect(self.command_executor, timeout=timeout*1000)

|

||||

browser = browser_type.connect_over_cdp(self.command_executor, timeout=timeout * 1000)

|

||||

# 60,000 connection timeout only

|

||||

browser = browser_type.connect_over_cdp(self.command_executor, timeout=60000)

|

||||

|

||||

# Set user agent to prevent Cloudflare from blocking the browser

|

||||

# Use the default one configured in the App.py model that's passed from fetch_site_status.py

|

||||

context = browser.new_context(

|

||||

user_agent=request_headers['User-Agent'] if request_headers.get('User-Agent') else 'Mozilla/5.0',

|

||||

proxy=self.proxy

|

||||

proxy=self.proxy,

|

||||

# This is needed to enable JavaScript execution on GitHub and others

|

||||

bypass_csp=True,

|

||||

# Should never be needed

|

||||

accept_downloads=False

|

||||

)

|

||||

|

||||

page = context.new_page()

|

||||

page.set_viewport_size({"width": 1280, "height": 1024})

|

||||

try:

|

||||

response = page.goto(url, timeout=timeout * 1000, wait_until='commit')

|

||||

# Wait_until = commit

|

||||

# - `'commit'` - consider operation to be finished when network response is received and the document started loading.

|

||||

# Better to not use any smarts from Playwright and just wait an arbitrary number of seconds

|

||||

# This seemed to solve nearly all 'TimeoutErrors'

|

||||

extra_wait = int(os.getenv("WEBDRIVER_DELAY_BEFORE_CONTENT_READY", 5)) + self.render_extract_delay

|

||||

page.wait_for_timeout(extra_wait * 1000)

|

||||

page.set_default_navigation_timeout(90000)

|

||||

page.set_default_timeout(90000)

|

||||

|

||||

# Bug - never set viewport size BEFORE page.goto

|

||||

|

||||

# Waits for the next navigation. Using Python context manager

|

||||

# prevents a race condition between clicking and waiting for a navigation.

|

||||

with page.expect_navigation():

|

||||

response = page.goto(url, wait_until='load')

|

||||

|

||||

except playwright._impl._api_types.TimeoutError as e:

|

||||

raise EmptyReply(url=url, status_code=None)

|

||||

context.close()

|

||||

browser.close()

|

||||

# This can be ok, we will try to grab what we could retrieve

|

||||

pass

|

||||

except Exception as e:

|

||||

print ("other exception when page.goto")

|

||||

print (str(e))

|

||||

context.close()

|

||||

browser.close()

|

||||

raise PageUnloadable(url=url, status_code=None)

|

||||

|

||||

if response is None:

|

||||

context.close()

|

||||

browser.close()

|

||||

print ("response object was none")

|

||||

raise EmptyReply(url=url, status_code=None)

|

||||

|

||||

if len(page.content().strip()) == 0:

|

||||

raise EmptyReply(url=url, status_code=None)

|

||||

|

||||

self.status_code = response.status

|

||||

# Bug 2(?) Set the viewport size AFTER loading the page

|

||||

page.set_viewport_size({"width": 1280, "height": 1024})

|

||||

extra_wait = int(os.getenv("WEBDRIVER_DELAY_BEFORE_CONTENT_READY", 5)) + self.render_extract_delay

|

||||

time.sleep(extra_wait)

|

||||

self.content = page.content()

|

||||

self.status_code = response.status

|

||||

|

||||

if len(self.content.strip()) == 0:

|

||||

context.close()

|

||||

browser.close()

|

||||

print ("Content was empty")

|

||||

raise EmptyReply(url=url, status_code=None)

|

||||

|

||||

self.headers = response.all_headers()

|

||||

|

||||

if current_css_filter is not None:

|

||||

page.evaluate("var css_filter={}".format(json.dumps(current_css_filter)))

|

||||

else:

|

||||

page.evaluate("var css_filter=''")

|

||||

|

||||

self.xpath_data = page.evaluate("async () => {" + self.xpath_element_js + "}")

|

||||

|

||||

# Bug 3 in Playwright screenshot handling

|

||||

# Some bug where it gives the wrong screenshot size, but making a request with the clip set first seems to solve it

|

||||

# JPEG is better here because the screenshots can be very very large

|

||||

page.screenshot(type='jpeg', clip={'x': 1.0, 'y': 1.0, 'width': 1280, 'height': 1024})

|

||||

self.screenshot = page.screenshot(type='jpeg', full_page=True, quality=90)

|

||||

try:

|

||||

page.screenshot(type='jpeg', clip={'x': 1.0, 'y': 1.0, 'width': 1280, 'height': 1024})

|

||||

self.screenshot = page.screenshot(type='jpeg', full_page=True, quality=92)

|

||||

except Exception as e:

|

||||

context.close()

|

||||

browser.close()

|

||||

raise ScreenshotUnavailable(url=url, status_code=None)

|

||||

|

||||

context.close()

|

||||

browser.close()

|

||||

|

||||

@@ -220,7 +415,8 @@ class base_html_webdriver(Fetcher):

|

||||

request_headers,

|

||||

request_body,

|

||||

request_method,

|

||||

ignore_status_codes=False):

|

||||

ignore_status_codes=False,

|

||||

current_css_filter=None):

|

||||

|

||||

from selenium import webdriver

|

||||

from selenium.webdriver.common.desired_capabilities import DesiredCapabilities

|

||||

@@ -240,6 +436,10 @@ class base_html_webdriver(Fetcher):

|

||||

self.quit()

|

||||

raise

|

||||

|

||||

self.driver.set_window_size(1280, 1024)

|

||||

self.driver.implicitly_wait(int(os.getenv("WEBDRIVER_DELAY_BEFORE_CONTENT_READY", 5)))

|

||||

self.screenshot = self.driver.get_screenshot_as_png()

|

||||

|

||||

# @todo - how to check this? is it possible?

|

||||

self.status_code = 200

|

||||

# @todo somehow we should try to get this working for WebDriver

|

||||

@@ -249,8 +449,6 @@ class base_html_webdriver(Fetcher):

|

||||

time.sleep(int(os.getenv("WEBDRIVER_DELAY_BEFORE_CONTENT_READY", 5)) + self.render_extract_delay)

|

||||

self.content = self.driver.page_source

|

||||

self.headers = {}

|

||||

self.screenshot = self.driver.get_screenshot_as_png()

|

||||

self.quit()

|

||||

|

||||

# Does the connection to the webdriver work? run a test connection.

|

||||

def is_ready(self):

|

||||

@@ -287,7 +485,8 @@ class html_requests(Fetcher):

|

||||

request_headers,

|

||||

request_body,

|

||||

request_method,

|

||||

ignore_status_codes=False):

|

||||

ignore_status_codes=False,

|

||||

current_css_filter=None):

|

||||

|

||||

proxies={}

|

||||

|

||||

|

||||

@@ -94,6 +94,7 @@ class perform_site_check():

|

||||

# If the klass doesn't exist, just use a default

|

||||

klass = getattr(content_fetcher, "html_requests")

|

||||

|

||||

|

||||

proxy_args = self.set_proxy_from_list(watch)

|

||||

fetcher = klass(proxy_override=proxy_args)

|

||||

|

||||

@@ -104,7 +105,8 @@ class perform_site_check():

|

||||

elif system_webdriver_delay is not None:

|

||||

fetcher.render_extract_delay = system_webdriver_delay

|

||||

|

||||

fetcher.run(url, timeout, request_headers, request_body, request_method, ignore_status_code)

|

||||