Compare commits

391 Commits

0.38.1...improve-no

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

df2c58d1ff | ||

|

|

d29e0eea47 | ||

|

|

7e1e763989 | ||

|

|

327cc4af34 | ||

|

|

6008ff516e | ||

|

|

cdcf4b353f | ||

|

|

1ab70f8e86 | ||

|

|

8227c012a7 | ||

|

|

c113d5fb24 | ||

|

|

8c8d4066d7 | ||

|

|

277dc9e1c1 | ||

|

|

fc0fd1ce9d | ||

|

|

bd6127728a | ||

|

|

4101ae00c6 | ||

|

|

62f14df3cb | ||

|

|

560d465c59 | ||

|

|

7929aeddfc | ||

|

|

8294519f43 | ||

|

|

8ba8a220b6 | ||

|

|

aa3c8a9370 | ||

|

|

dbb5468cdc | ||

|

|

329c7620fb | ||

|

|

1f974bfbb0 | ||

|

|

437c8525af | ||

|

|

a2a1d5ae90 | ||

|

|

2566de2aae | ||

|

|

dfec8dbb39 | ||

|

|

5cefb16e52 | ||

|

|

341ae24b73 | ||

|

|

f47c2fb7f6 | ||

|

|

9d742446ab | ||

|

|

e3e022b0f4 | ||

|

|

6de4027c27 | ||

|

|

cda3837355 | ||

|

|

7983675325 | ||

|

|

eef56e52c6 | ||

|

|

8e3195f394 | ||

|

|

e17c2121f7 | ||

|

|

07e279b38d | ||

|

|

2c834cfe37 | ||

|

|

dbb5c666f0 | ||

|

|

70b3493866 | ||

|

|

3b11c474d1 | ||

|

|

890e1e6dcd | ||

|

|

6734fb91a2 | ||

|

|

16809b48f8 | ||

|

|

67c833d2bc | ||

|

|

31fea55ee4 | ||

|

|

b6c50d3b1a | ||

|

|

034507f14f | ||

|

|

0e385b1c22 | ||

|

|

f28c260576 | ||

|

|

18f0b63b7d | ||

|

|

97045e7a7b | ||

|

|

9807cf0cda | ||

|

|

d4b5237103 | ||

|

|

dc6f76ba64 | ||

|

|

1f2f93184e | ||

|

|

0f08c8dda3 | ||

|

|

68db20168e | ||

|

|

1d4474f5a3 | ||

|

|

613308881c | ||

|

|

f69585b276 | ||

|

|

0179940df1 | ||

|

|

c0d0424e7e | ||

|

|

014dc61222 | ||

|

|

06517bfd22 | ||

|

|

b3a115dd4a | ||

|

|

ffc4215411 | ||

|

|

9e708810d1 | ||

|

|

1e8aa6158b | ||

|

|

015353eccc | ||

|

|

501183e66b | ||

|

|

def74f27e6 | ||

|

|

37775a46c6 | ||

|

|

e4eaa0c817 | ||

|

|

206ded4201 | ||

|

|

9e71f2aa35 | ||

|

|

f9594aeffb | ||

|

|

b4e1353376 | ||

|

|

5b670c38d3 | ||

|

|

2a9fb12451 | ||

|

|

6c3c5dc28a | ||

|

|

8f062bfec9 | ||

|

|

380c512cc2 | ||

|

|

d7ed7c44ed | ||

|

|

34a87c0f41 | ||

|

|

4074fe53f1 | ||

|

|

44d599d0d1 | ||

|

|

615fe9290a | ||

|

|

2cc6955bc3 | ||

|

|

9809af142d | ||

|

|

1890881977 | ||

|

|

9fc2fe85d5 | ||

|

|

bb3c546838 | ||

|

|

165f794595 | ||

|

|

a440eece9e | ||

|

|

34c83f0e7c | ||

|

|

f6e518497a | ||

|

|

63e91a3d66 | ||

|

|

3034d047c2 | ||

|

|

2620818ba7 | ||

|

|

9fe4f95990 | ||

|

|

ffd2a89d60 | ||

|

|

8f40f19328 | ||

|

|

082634f851 | ||

|

|

334010025f | ||

|

|

81aa8fa16b | ||

|

|

c79d6824e3 | ||

|

|

946377d2be | ||

|

|

5db9a30ad4 | ||

|

|

1d060225e1 | ||

|

|

7e0f0d0fd8 | ||

|

|

8b2afa2220 | ||

|

|

f55ffa0f62 | ||

|

|

942c3f021f | ||

|

|

5483f5d694 | ||

|

|

f2fa638480 | ||

|

|

82d1a7f73e | ||

|

|

9fc291fb63 | ||

|

|

3e8a15456a | ||

|

|

2a03f3f57e | ||

|

|

ffad5cca97 | ||

|

|

60a9a786e0 | ||

|

|

165e950e55 | ||

|

|

c25294ca57 | ||

|

|

d4359c2e67 | ||

|

|

44fc804991 | ||

|

|

b72c9eaf62 | ||

|

|

7ce9e4dfc2 | ||

|

|

3cc6586695 | ||

|

|

09204cb43f | ||

|

|

a709122874 | ||

|

|

efbeaf9535 | ||

|

|

1a19fba07d | ||

|

|

eb9020c175 | ||

|

|

13bb44e4f8 | ||

|

|

47f294c23b | ||

|

|

a4cce16188 | ||

|

|

69aec23d1d | ||

|

|

f85ccffe0a | ||

|

|

0005131472 | ||

|

|

3be1f4ea44 | ||

|

|

46c72a7fb3 | ||

|

|

96664ffb10 | ||

|

|

615fa2c5b2 | ||

|

|

fd45fcce2f | ||

|

|

75ca7ec504 | ||

|

|

8b1e9f6591 | ||

|

|

883aa968fd | ||

|

|

3240ed2339 | ||

|

|

a89ffffc76 | ||

|

|

fda93c3798 | ||

|

|

a51c555964 | ||

|

|

b401998030 | ||

|

|

014fda9058 | ||

|

|

dd384619e0 | ||

|

|

85715120e2 | ||

|

|

a0e4f9b88a | ||

|

|

04bef6091e | ||

|

|

536948c8c6 | ||

|

|

d4f4ab306a | ||

|

|

8d2e240a2a | ||

|

|

d7ed479ca2 | ||

|

|

f25cdf0a67 | ||

|

|

5214a7e0f3 | ||

|

|

eb3dca3805 | ||

|

|

a580c238b6 | ||

|

|

7ca89f5ec3 | ||

|

|

8ab8aaa6ae | ||

|

|

22ef9afb93 | ||

|

|

abaec224f6 | ||

|

|

5a645fb74d | ||

|

|

14db60e518 | ||

|

|

e250c552d0 | ||

|

|

8e54a17e14 | ||

|

|

8607eccaad | ||

|

|

17511d0d7d | ||

|

|

41b806228c | ||

|

|

453cf81e1d | ||

|

|

0095b28ea3 | ||

|

|

73101a47e7 | ||

|

|

03f776ca45 | ||

|

|

39b7be9e7a | ||

|

|

6611823962 | ||

|

|

c1c453e4fe | ||

|

|

4887180671 | ||

|

|

ac7378b7fb | ||

|

|

eeba8c864d | ||

|

|

abe88192f4 | ||

|

|

af8efbb6d2 | ||

|

|

bbc2875ef3 | ||

|

|

b7ca10ebac | ||

|

|

a896493797 | ||

|

|

e5fe095f16 | ||

|

|

271181968f | ||

|

|

8206383ee5 | ||

|

|

ecfc02ba23 | ||

|

|

3331ccd061 | ||

|

|

bd8f389a65 | ||

|

|

bc74227635 | ||

|

|

07c60a6acc | ||

|

|

7916faf58b | ||

|

|

febb2bbf0d | ||

|

|

59d31bf76f | ||

|

|

f87f7077a6 | ||

|

|

f166ab1e30 | ||

|

|

55e679e973 | ||

|

|

e211ba806f | ||

|

|

b33105d576 | ||

|

|

b73f5a5c88 | ||

|

|

023951a10e | ||

|

|

fbd9ecab62 | ||

|

|

b5c1fce136 | ||

|

|

489671dcca | ||

|

|

d4dc3466dc | ||

|

|

0439acacbe | ||

|

|

735fc2ac8e | ||

|

|

8a825f0055 | ||

|

|

d0ae8b7923 | ||

|

|

a504773941 | ||

|

|

feb8e6c76c | ||

|

|

a37a5038d8 | ||

|

|

f1933b786c | ||

|

|

d6a6ef2c1d | ||

|

|

cf9554b169 | ||

|

|

d602cf4646 | ||

|

|

dfcae4ee64 | ||

|

|

e3bcd8c9bf | ||

|

|

c4990fa3f9 | ||

|

|

98461d813e | ||

|

|

8ec17a4c83 | ||

|

|

ee708cc395 | ||

|

|

8a670c029a | ||

|

|

9fa5aec01e | ||

|

|

43c9cb8b0c | ||

|

|

b6a359d55b | ||

|

|

ae5a88beea | ||

|

|

a899d338e9 | ||

|

|

7975e8ec2e | ||

|

|

ce383bcd04 | ||

|

|

0b0cdb101b | ||

|

|

396509bae8 | ||

|

|

2973f40035 | ||

|

|

067fac862c | ||

|

|

20647ea319 | ||

|

|

fafc7fda62 | ||

|

|

b1aaf9f277 | ||

|

|

18987aeb23 | ||

|

|

856789a9ba | ||

|

|

2857c7bb77 | ||

|

|

df951637c4 | ||

|

|

ba6fe076bb | ||

|

|

9815fc2526 | ||

|

|

e71dbbe771 | ||

|

|

bd222c99c6 | ||

|

|

4b002ad9e0 | ||

|

|

fe2ffd6356 | ||

|

|

266bebb5bc | ||

|

|

115ff5bc2e | ||

|

|

dd6a24d337 | ||

|

|

f0d418d58c | ||

|

|

10d3b09051 | ||

|

|

35d0c74454 | ||

|

|

dd450b81ad | ||

|

|

512d76c52b | ||

|

|

5a10acfd09 | ||

|

|

a7c09c8990 | ||

|

|

9235eae608 | ||

|

|

5bbd82be79 | ||

|

|

7f8c0fb2fa | ||

|

|

489eedf34e | ||

|

|

3956b3fd68 | ||

|

|

61c1d213d0 | ||

|

|

e07f573f64 | ||

|

|

ecba130fdb | ||

|

|

ff6dc842c0 | ||

|

|

4659993ecf | ||

|

|

0a29b3a582 | ||

|

|

c55bf418c5 | ||

|

|

4bbb7d99b6 | ||

|

|

a8e92e2226 | ||

|

|

c17327633f | ||

|

|

56d1dde7c3 | ||

|

|

6e4ddacaf8 | ||

|

|

3195ffa1c6 | ||

|

|

c749d2ee44 | ||

|

|

ec94359f3c | ||

|

|

4d0bd58eb1 | ||

|

|

3525f43469 | ||

|

|

d70252c1eb | ||

|

|

b57b94c63a | ||

|

|

9e914c140e | ||

|

|

5d5ceb2f52 | ||

|

|

bc0303c5da | ||

|

|

1240da4a6e | ||

|

|

4267bda853 | ||

|

|

db1ff1843c | ||

|

|

fe3c20b618 | ||

|

|

2fa93cba3a | ||

|

|

254fbd5a47 | ||

|

|

18f2318572 | ||

|

|

84417fc2b1 | ||

|

|

7f7fc737b3 | ||

|

|

2dc43bdfd3 | ||

|

|

95e39aa727 | ||

|

|

2c71f577e0 | ||

|

|

f987d32c72 | ||

|

|

cd7df86f54 | ||

|

|

cb8fa2583a | ||

|

|

3d3e5db81c | ||

|

|

c9860dc55e | ||

|

|

dbd5cf117a | ||

|

|

e805d6ebe3 | ||

|

|

01f469d91d | ||

|

|

e91cab0c6d | ||

|

|

106c3269a6 | ||

|

|

1628602860 | ||

|

|

ca0ab50c5e | ||

|

|

df0b7bb0fe | ||

|

|

fe59ac4986 | ||

|

|

25476bfcb2 | ||

|

|

6901fc493d | ||

|

|

c40417ff96 | ||

|

|

fd2d938528 | ||

|

|

cd20dea590 | ||

|

|

f921e98265 | ||

|

|

c0e905265c | ||

|

|

5e6a923c35 | ||

|

|

7618081e83 | ||

|

|

b903280cd0 | ||

|

|

5b60314e8b | ||

|

|

dfd34d2a5b | ||

|

|

98f6f0c80d | ||

|

|

8c65c60c27 | ||

|

|

bd0d9048e7 | ||

|

|

3b14be4fef | ||

|

|

05f7e123ed | ||

|

|

54d80ddea0 | ||

|

|

b9e0ad052f | ||

|

|

f8937e437a | ||

|

|

fbe9270528 | ||

|

|

58c3bc371d | ||

|

|

4683b0d120 | ||

|

|

5fb9bbdfa3 | ||

|

|

5883e5b920 | ||

|

|

b99957f54a | ||

|

|

21cb7fbca9 | ||

|

|

4ed5d4c2e7 | ||

|

|

8c3163f459 | ||

|

|

a11b6daa2e | ||

|

|

642ad5660d | ||

|

|

252d6ee6fd | ||

|

|

ba7b6b0f8b | ||

|

|

f2094a3010 | ||

|

|

b9ed7e2d20 | ||

|

|

6d3962acb6 | ||

|

|

32a0d38025 | ||

|

|

df08d51d2a | ||

|

|

d87c643e58 | ||

|

|

9e08f326be | ||

|

|

1f821d6e8b | ||

|

|

00fe4d4e41 | ||

|

|

f88561e713 | ||

|

|

dd193ffcec | ||

|

|

1e39a1b745 | ||

|

|

1084603375 | ||

|

|

3f9d949534 | ||

|

|

684deaed35 | ||

|

|

1b931fef20 | ||

|

|

d1976db149 | ||

|

|

a8fb17df9a | ||

|

|

8f28c80ef5 | ||

|

|

5a2c534fde | ||

|

|

e2304b2ce0 | ||

|

|

b87236ea20 | ||

|

|

dfbc9bfc53 | ||

|

|

f3ba051df4 | ||

|

|

affe39ff98 | ||

|

|

0f5d5e6caf | ||

|

|

2a66ac1db0 | ||

|

|

07308eedbd | ||

|

|

750b882546 | ||

|

|

1c09407e24 | ||

|

|

7e87591ae5 | ||

|

|

9e6c2bf3e0 | ||

|

|

c396cf8176 | ||

|

|

b19a037fac | ||

|

|

5cd4a36896 | ||

|

|

aec3531127 | ||

|

|

78434114be |

44

.github/ISSUE_TEMPLATE/bug_report.md

vendored

Normal file

@@ -0,0 +1,44 @@

---
name: Bug report
about: Create a bug report, if you don't follow this template, your report will be DELETED
title: ''
labels: 'triage'
assignees: 'dgtlmoon'

---

**Describe the bug**
A clear and concise description of what the bug is.

**Version**
*Exact version* in the top right area: 0....

**To Reproduce**

Steps to reproduce the behavior:
1. Go to '...'
2. Click on '....'
3. Scroll down to '....'
4. See error

! ALWAYS INCLUDE AN EXAMPLE URL WHERE IT IS POSSIBLE TO RE-CREATE THE ISSUE - USE THE 'SHARE WATCH' FEATURE AND PASTE IN THE SHARE-LINK!

**Expected behavior**
A clear and concise description of what you expected to happen.

**Screenshots**
If applicable, add screenshots to help explain your problem.

**Desktop (please complete the following information):**
- OS: [e.g. iOS]
- Browser [e.g. chrome, safari]
- Version [e.g. 22]

**Smartphone (please complete the following information):**
- Device: [e.g. iPhone6]
- OS: [e.g. iOS8.1]
- Browser [e.g. stock browser, safari]
- Version [e.g. 22]

**Additional context**
Add any other context about the problem here.

23

.github/ISSUE_TEMPLATE/feature_request.md

vendored

Normal file

@@ -0,0 +1,23 @@

---
name: Feature request
about: Suggest an idea for this project
title: '[feature]'
labels: 'enhancement'
assignees: ''

---
**Version and OS**
For example, 0.123 on linux/docker

**Is your feature request related to a problem? Please describe.**
A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

**Describe the solution you'd like**
A clear and concise description of what you want to happen.

**Describe the use-case and give concrete real-world examples**
Attach any HTML/JSON, give links to sites, screenshots etc, we are not mind readers


**Additional context**
Add any other context or screenshots about the feature request here.

129

.github/workflows/containers.yml

vendored

Normal file

@@ -0,0 +1,129 @@

name: Build and push containers

on:
  # Automatically triggered by a testing workflow passing, but this is only checked when it lands in the `master`/default branch
  # workflow_run:
  #   workflows: ["ChangeDetection.io Test"]
  #   branches: [master]
  #   tags: ['0.*']
  #   types: [completed]

  # Or a new tagged release
  release:
    types: [published, edited]

  push:
    branches:
      - master

jobs:
  metadata:
    runs-on: ubuntu-latest
    steps:
      - name: Show metadata
        run: |
          echo SHA ${{ github.sha }}
          echo github.ref: ${{ github.ref }}
          echo github_ref: $GITHUB_REF
          echo Event name: ${{ github.event_name }}
          echo Ref ${{ github.ref }}
          echo c: ${{ github.event.workflow_run.conclusion }}
          echo r: ${{ github.event.workflow_run }}
          echo tname: "${{ github.event.release.tag_name }}"
          echo headbranch: -${{ github.event.workflow_run.head_branch }}-
          set

  build-push-containers:
    runs-on: ubuntu-latest
    # If the testing workflow has a success, then we build to :latest
    # Or if we are in a tagged release scenario.
    if: ${{ github.event.workflow_run.conclusion == 'success' }} || ${{ github.event.release.tag_name }} != ''
    steps:
      - uses: actions/checkout@v2
      - name: Set up Python 3.9
        uses: actions/setup-python@v2
        with:
          python-version: 3.9

      - name: Install dependencies
        run: |
          python -m pip install --upgrade pip
          pip install flake8 pytest
          if [ -f requirements.txt ]; then pip install -r requirements.txt; fi
          if [ -f requirements-dev.txt ]; then pip install -r requirements-dev.txt; fi

      - name: Create release metadata
        run: |
          # COPY'ed by Dockerfile into changedetectionio/ of the image, then read by the server in store.py
          echo ${{ github.sha }} > changedetectionio/source.txt
          echo ${{ github.ref }} > changedetectionio/tag.txt

      - name: Set up QEMU
        uses: docker/setup-qemu-action@v1
        with:
          image: tonistiigi/binfmt:latest
          platforms: all

      - name: Login to GitHub Container Registry
        uses: docker/login-action@v1
        with:
          registry: ghcr.io
          username: ${{ github.actor }}
          password: ${{ secrets.GITHUB_TOKEN }}

      - name: Login to Docker Hub Container Registry
        uses: docker/login-action@v1
        with:
          username: ${{ secrets.DOCKER_HUB_USERNAME }}
          password: ${{ secrets.DOCKER_HUB_ACCESS_TOKEN }}

      - name: Set up Docker Buildx
        id: buildx
        uses: docker/setup-buildx-action@v1
        with:
          install: true
          version: latest
          driver-opts: image=moby/buildkit:master

      # master always builds :latest
      - name: Build and push :latest
        id: docker_build
        if: ${{ github.ref }} == "refs/heads/master"
        uses: docker/build-push-action@v2
        with:
          context: ./
          file: ./Dockerfile
          push: true
          tags: |
            ${{ secrets.DOCKER_HUB_USERNAME }}/changedetection.io:latest,ghcr.io/${{ github.repository }}:latest
          platforms: linux/amd64,linux/arm64,linux/arm/v6,linux/arm/v7
          cache-from: type=local,src=/tmp/.buildx-cache
          cache-to: type=local,dest=/tmp/.buildx-cache

      # A new tagged release is required, which builds :tag
      - name: Build and push :tag
        id: docker_build_tag_release
        if: github.event_name == 'release' && startsWith(github.event.release.tag_name, '0.')
        uses: docker/build-push-action@v2
        with:
          context: ./
          file: ./Dockerfile
          push: true
          tags: |
            ${{ secrets.DOCKER_HUB_USERNAME }}/changedetection.io:${{ github.event.release.tag_name }},ghcr.io/dgtlmoon/changedetection.io:${{ github.event.release.tag_name }}
          platforms: linux/amd64,linux/arm64,linux/arm/v6,linux/arm/v7
          cache-from: type=local,src=/tmp/.buildx-cache
          cache-to: type=local,dest=/tmp/.buildx-cache

      - name: Image digest
        run: echo step SHA ${{ steps.vars.outputs.sha_short }} tag ${{steps.vars.outputs.tag}} branch ${{steps.vars.outputs.branch}} digest ${{ steps.docker_build.outputs.digest }}

      - name: Cache Docker layers
        uses: actions/cache@v2
        with:
          path: /tmp/.buildx-cache
          key: ${{ runner.os }}-buildx-${{ github.sha }}
          restore-keys: |
            ${{ runner.os }}-buildx-
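The tagging rules described by the comments in the `containers.yml` workflow can be summarised in a short Python sketch. It only illustrates the intended decision and is not part of the workflow: `image_tags` and its parameters are hypothetical names, the repository names are passed in rather than read from secrets, and `0.39` is just an example tag. Writing the rule out this way also sidesteps a commonly reported GitHub Actions pitfall: as far as I understand, an `if:` that mixes `${{ }}` blocks with bare operators is substituted into a non-empty string and therefore tends to evaluate as true whatever the event is.

```python
from typing import List


def image_tags(event_name: str, ref: str, release_tag: str, repos: List[str]) -> List[str]:
    """Return the image references the workflow comments say should be pushed.

    Hypothetical helper: it mirrors the two build steps and nothing more.
    """
    tags: List[str] = []

    # "master always builds :latest"
    if ref == "refs/heads/master":
        tags += [f"{repo}:latest" for repo in repos]

    # "A new tagged release is required, which builds :tag"
    if event_name == "release" and release_tag.startswith("0."):
        tags += [f"{repo}:{release_tag}" for repo in repos]

    return tags


# Example repositories; the workflow itself takes the Docker Hub name from a secret.
REPOS = ["dgtlmoon/changedetection.io", "ghcr.io/dgtlmoon/changedetection.io"]

print(image_tags("push", "refs/heads/master", "", REPOS))
print(image_tags("release", "refs/tags/0.39", "0.39", REPOS))
```

Under these assumptions a push to `master` yields only `:latest` references, and a published `0.x` release yields only the versioned references.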

91

.github/workflows/image-tag.yml

vendored

@@ -1,91 +0,0 @@

|

||||

name: Test, build and push tagged release to Docker Hub

|

||||

|

||||

on:

|

||||

push:

|

||||

tags:

|

||||

- '*.*'

|

||||

|

||||

jobs:

|

||||

build:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

|

||||

- uses: actions/checkout@v2

|

||||

- name: Set up Python 3.9

|

||||

uses: actions/setup-python@v2

|

||||

with:

|

||||

python-version: 3.9

|

||||

|

||||

- uses: olegtarasov/get-tag@v2.1

|

||||

id: tagName

|

||||

|

||||

# with:

|

||||

# tagRegex: "foobar-(.*)" # Optional. Returns specified group text as tag name. Full tag string is returned if regex is not defined.

|

||||

# tagRegexGroup: 1 # Optional. Default is 1.

|

||||

|

||||

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

pip install flake8 pytest

|

||||

if [ -f requirements.txt ]; then pip install -r requirements.txt; fi

|

||||

|

||||

- name: Lint with flake8

|

||||

run: |

|

||||

# stop the build if there are Python syntax errors or undefined names

|

||||

flake8 . --count --select=E9,F63,F7,F82 --show-source --statistics

|

||||

# exit-zero treats all errors as warnings. The GitHub editor is 127 chars wide

|

||||

flake8 . --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics

|

||||

|

||||

- name: Create release metadata

|

||||

run: |

|

||||

# COPY'ed by Dockerfile into backend/ of the image, then read by the server in store.py

|

||||

echo ${{ github.sha }} > backend/source.txt

|

||||

echo ${{ github.ref }} > backend/tag.txt

|

||||

|

||||

- name: Test with pytest

|

||||

run: |

|

||||

# Each test is totally isolated and performs its own cleanup/reset

|

||||

cd backend; ./run_all_tests.sh

|

||||

|

||||

- name: Set up QEMU

|

||||

uses: docker/setup-qemu-action@v1

|

||||

with:

|

||||

image: tonistiigi/binfmt:latest

|

||||

platforms: all

|

||||

- name: Login to Docker Hub

|

||||

uses: docker/login-action@v1

|

||||

with:

|

||||

username: ${{ secrets.DOCKER_HUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKER_HUB_ACCESS_TOKEN }}

|

||||

|

||||

- name: Set up Docker Buildx

|

||||

id: buildx

|

||||

uses: docker/setup-buildx-action@v1

|

||||

with:

|

||||

install: true

|

||||

version: latest

|

||||

driver-opts: image=moby/buildkit:master

|

||||

|

||||

- name: tag

|

||||

run : echo ${{ github.event.release.tag_name }}

|

||||

|

||||

- name: Build and push tagged version

|

||||

id: docker_build

|

||||

uses: docker/build-push-action@v2

|

||||

with:

|

||||

context: ./

|

||||

file: ./Dockerfile

|

||||

push: true

|

||||

tags: |

|

||||

${{ secrets.DOCKER_HUB_USERNAME }}/changedetection.io:${{ steps.tagName.outputs.tag }}

|

||||

platforms: linux/amd64,linux/arm64,linux/arm/v6,linux/arm/v7

|

||||

cache-from: type=local,src=/tmp/.buildx-cache

|

||||

cache-to: type=local,dest=/tmp/.buildx-cache

|

||||

env:

|

||||

SOURCE_NAME: ${{ steps.branch_name.outputs.SOURCE_NAME }}

|

||||

SOURCE_BRANCH: ${{ steps.branch_name.outputs.SOURCE_BRANCH }}

|

||||

SOURCE_TAG: ${{ steps.branch_name.outputs.SOURCE_TAG }}

|

||||

|

||||

- name: Image digest

|

||||

run: echo step SHA ${{ steps.vars.outputs.sha_short }} tag ${{steps.vars.outputs.tag}} branch ${{steps.vars.outputs.branch}} digest ${{ steps.docker_build.outputs.digest }}

|

||||

88

.github/workflows/image.yml

vendored

@@ -1,88 +0,0 @@

|

||||

name: Test, build and push to Docker Hub

|

||||

|

||||

on:

|

||||

push:

|

||||

branches: [ master, arm-build ]

|

||||

|

||||

jobs:

|

||||

build:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

|

||||

- uses: actions/checkout@v2

|

||||

- name: Set up Python 3.9

|

||||

uses: actions/setup-python@v2

|

||||

with:

|

||||

python-version: 3.9

|

||||

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

pip install flake8 pytest

|

||||

if [ -f requirements.txt ]; then pip install -r requirements.txt; fi

|

||||

|

||||

- name: Lint with flake8

|

||||

run: |

|

||||

# stop the build if there are Python syntax errors or undefined names

|

||||

flake8 . --count --select=E9,F63,F7,F82 --show-source --statistics

|

||||

# exit-zero treats all errors as warnings. The GitHub editor is 127 chars wide

|

||||

flake8 . --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics

|

||||

|

||||

- name: Create release metadata

|

||||

run: |

|

||||

# COPY'ed by Dockerfile into backend/ of the image, then read by the server in store.py

|

||||

echo ${{ github.sha }} > backend/source.txt

|

||||

echo ${{ github.ref }} > backend/tag.txt

|

||||

|

||||

- name: Test with pytest

|

||||

run: |

|

||||

# Each test is totally isolated and performs its own cleanup/reset

|

||||

cd backend; ./run_all_tests.sh

|

||||

|

||||

- name: Set up QEMU

|

||||

uses: docker/setup-qemu-action@v1

|

||||

with:

|

||||

image: tonistiigi/binfmt:latest

|

||||

platforms: all

|

||||

- name: Login to Docker Hub

|

||||

uses: docker/login-action@v1

|

||||

with:

|

||||

username: ${{ secrets.DOCKER_HUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKER_HUB_ACCESS_TOKEN }}

|

||||

|

||||

- name: Set up Docker Buildx

|

||||

id: buildx

|

||||

uses: docker/setup-buildx-action@v1

|

||||

with:

|

||||

install: true

|

||||

version: latest

|

||||

driver-opts: image=moby/buildkit:master

|

||||

|

||||

- name: Build and push

|

||||

id: docker_build

|

||||

uses: docker/build-push-action@v2

|

||||

with:

|

||||

context: ./

|

||||

file: ./Dockerfile

|

||||

push: true

|

||||

tags: |

|

||||

${{ secrets.DOCKER_HUB_USERNAME }}/changedetection.io:latest

|

||||

# ${{ secrets.DOCKER_HUB_USERNAME }}:/changedetection.io:${{ env.RELEASE_VERSION }}

|

||||

platforms: linux/amd64,linux/arm64,linux/arm/v6,linux/arm/v7

|

||||

# platforms: linux/amd64

|

||||

cache-from: type=local,src=/tmp/.buildx-cache

|

||||

cache-to: type=local,dest=/tmp/.buildx-cache

|

||||

|

||||

- name: Image digest

|

||||

run: echo step SHA ${{ steps.vars.outputs.sha_short }} tag ${{steps.vars.outputs.tag}} branch ${{steps.vars.outputs.branch}} digest ${{ steps.docker_build.outputs.digest }}

|

||||

|

||||

# failed: Cache service responded with 503

|

||||

# - name: Cache Docker layers

|

||||

# uses: actions/cache@v2

|

||||

# with:

|

||||

# path: /tmp/.buildx-cache

|

||||

# key: ${{ runner.os }}-buildx-${{ github.sha }}

|

||||

# restore-keys: |

|

||||

# ${{ runner.os }}-buildx-

|

||||

|

||||

|

||||

44

.github/workflows/pypi.yml

vendored

Normal file

@@ -0,0 +1,44 @@

name: PyPi Test and Push tagged release

# Triggers the workflow on push or pull request events
on:
  workflow_run:
    workflows: ["ChangeDetection.io Test"]
    tags: '*.*'
    types: [completed]


jobs:
  test-build:
    runs-on: ubuntu-latest
    steps:

      - uses: actions/checkout@v2
      - name: Set up Python 3.9
        uses: actions/setup-python@v2
        with:
          python-version: 3.9

      # - name: Install dependencies
      #   run: |
      #     python -m pip install --upgrade pip
      #     pip install flake8 pytest
      #     if [ -f requirements.txt ]; then pip install -r requirements.txt; fi
      #     if [ -f requirements-dev.txt ]; then pip install -r requirements-dev.txt; fi

      - name: Test that pip builds without error
        run: |
          pip3 --version
          python3 -m pip install wheel
          python3 setup.py bdist_wheel
          python3 -m pip install dist/changedetection.io-*-none-any.whl --force
          changedetection.io -d /tmp -p 10000 &
          sleep 3
          curl http://127.0.0.1:10000/static/styles/pure-min.css >/dev/null
          killall -9 changedetection.io

      # https://github.com/docker/build-push-action/blob/master/docs/advanced/test-before-push.md ?
      # https://github.com/docker/buildx/issues/59 ? Needs to be one platform?

      # https://github.com/docker/buildx/issues/495#issuecomment-918925854
      #if: ${{ github.event_name == 'release'}}
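The smoke test in this workflow starts the server, waits a fixed `sleep 3`, and then fetches a static file. A fixed delay can be too short on a slow runner, so one possible alternative is to poll until the server answers. The following is a minimal sketch of that idea, not something taken from the repository: `http_ok` and `wait_until` are hypothetical helper names, and the timeout values are arbitrary.

```python
import http.client
import time
import urllib.error
import urllib.request
from typing import Callable


def http_ok(url: str, timeout: float = 2.0) -> bool:
    """Return True if a GET to `url` answers with a 2xx status, False on errors."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timeouts and HTTP error statuses all count as "not ready".
        return False


def wait_until(probe: Callable[[], bool], timeout: float = 10.0, interval: float = 0.25) -> bool:
    """Call `probe` repeatedly until it returns True or `timeout` seconds have passed."""
    deadline = time.monotonic() + timeout
    while True:
        if probe():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

With the port used in the workflow, a readiness check could then look like `wait_until(lambda: http_ok("http://127.0.0.1:10000/static/styles/pure-min.css"))`, which returns as soon as the file is served instead of always waiting three seconds.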

18

.github/workflows/test-only.yml

vendored

@@ -4,7 +4,7 @@ name: ChangeDetection.io Test

|

||||

on: [push, pull_request]

|

||||

|

||||

jobs:

|

||||

build:

|

||||

test-build:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

|

||||

@@ -14,20 +14,32 @@ jobs:

|

||||

with:

|

||||

python-version: 3.9

|

||||

|

||||

- name: Show env vars

|

||||

run: set

|

||||

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

pip install flake8 pytest

|

||||

if [ -f requirements.txt ]; then pip install -r requirements.txt; fi

|

||||

|

||||

if [ -f requirements-dev.txt ]; then pip install -r requirements-dev.txt; fi

|

||||

- name: Lint with flake8

|

||||

run: |

|

||||

# stop the build if there are Python syntax errors or undefined names

|

||||

flake8 . --count --select=E9,F63,F7,F82 --show-source --statistics

|

||||

# exit-zero treats all errors as warnings. The GitHub editor is 127 chars wide

|

||||

flake8 . --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics

|

||||

|

||||

- name: Unit tests

|

||||

run: |

|

||||

python3 -m unittest changedetectionio.tests.unit.test_notification_diff

|

||||

|

||||

- name: Test with pytest

|

||||

run: |

|

||||

# Each test is totally isolated and performs its own cleanup/reset

|

||||

cd backend; ./run_all_tests.sh

|

||||

cd changedetectionio; ./run_all_tests.sh

|

||||

|

||||

# https://github.com/docker/build-push-action/blob/master/docs/advanced/test-before-push.md ?

|

||||

# https://github.com/docker/buildx/issues/59 ? Needs to be one platform?

|

||||

|

||||

# https://github.com/docker/buildx/issues/495#issuecomment-918925854

|

||||

|

||||

6

.gitignore

vendored

@@ -5,3 +5,9 @@ datastore/url-watches.json

|

||||

datastore/*

|

||||

__pycache__

|

||||

.pytest_cache

|

||||

build

|

||||

dist

|

||||

venv

|

||||

test-datastore

|

||||

*.egg-info*

|

||||

.vscode/settings.json

|

||||

|

||||

15

CONTRIBUTING.md

Normal file

@@ -0,0 +1,15 @@

Contributing is always welcome!

I am not a professional Flask developer, so if you know a better way to do something, please let me know!

Otherwise, it's always best to PR into the `dev` branch.

Please be sure that all new functionality has a matching test!

Use `pytest` to validate and test your changes. For example, you can run one of the existing tests with `pytest tests/test_notifications.py`.

```
pip3 install -r requirements-dev.txt
```

The development requirements are listed in https://github.com/dgtlmoon/changedetection.io/blob/master/requirements-dev.txt

14

Dockerfile

@@ -12,7 +12,7 @@ RUN apt-get update && apt-get install -y --no-install-recommends \

|

||||

libxslt-dev \

|

||||

zlib1g-dev \

|

||||

g++

|

||||

|

||||

|

||||

RUN mkdir /install

|

||||

WORKDIR /install

|

||||

|

||||

@@ -20,6 +20,11 @@ COPY requirements.txt /requirements.txt

|

||||

|

||||

RUN pip install --target=/dependencies -r /requirements.txt

|

||||

|

||||

# Playwright is an alternative to Selenium

|

||||

# Excluded this package from requirements.txt to prevent arm/v6 and arm/v7 builds from failing

|

||||

RUN pip install --target=/dependencies playwright~=1.20 \

|

||||

|| echo "WARN: Failed to install Playwright. The application can still run, but the Playwright option will be disabled."

|

||||

|

||||

# Final image stage

|

||||

FROM python:3.8-slim

|

||||

|

||||

@@ -42,12 +47,17 @@ ENV PYTHONUNBUFFERED=1

|

||||

|

||||

RUN [ ! -d "/datastore" ] && mkdir /datastore

|

||||

|

||||

# Re #80, sets SECLEVEL=1 in openssl.conf to allow monitoring sites with weak/old cipher suites

|

||||

RUN sed -i 's/^CipherString = .*/CipherString = DEFAULT@SECLEVEL=1/' /etc/ssl/openssl.cnf

|

||||

|

||||

# Copy modules over to the final image and add their dir to PYTHONPATH

|

||||

COPY --from=builder /dependencies /usr/local

|

||||

ENV PYTHONPATH=/usr/local

|

||||

|

||||

EXPOSE 5000

|

||||

|

||||

# The actual flask app

|

||||

COPY backend /app/backend

|

||||

COPY changedetectionio /app/changedetectionio

|

||||

# The eventlet server wrapper

|

||||

COPY changedetection.py /app/changedetection.py

|

||||

|

||||

|

||||
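Because the Playwright install in the builder stage is allowed to fail, application code has to cope with the package being absent. One common way to do that in Python is to check whether the module can be found before offering the feature. The sketch below assumes nothing about how changedetection.io itself handles this; `module_available` and `PLAYWRIGHT_ENABLED` are illustrative names only.

```python
import importlib.util


def module_available(name: str) -> bool:
    """Return True if the top-level module `name` can be found, without importing it."""
    return importlib.util.find_spec(name) is not None


# Hypothetical feature flag: only offer the Playwright option when the package exists.
PLAYWRIGHT_ENABLED = module_available("playwright")

if not PLAYWRIGHT_ENABLED:
    print("WARN: Playwright is not installed; the Playwright option is disabled.")
```

Checking with `find_spec` avoids importing a heavy package just to learn whether it is installed, which keeps start-up behaviour the same on the arm/v6 and arm/v7 images where the install may have been skipped.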

8

MANIFEST.in

Normal file

@@ -0,0 +1,8 @@

recursive-include changedetectionio/api *
recursive-include changedetectionio/templates *
recursive-include changedetectionio/static *
recursive-include changedetectionio/model *
include changedetection.py
global-exclude *.pyc
global-exclude node_modules
global-exclude venv

1

Procfile

Normal file

@@ -0,0 +1 @@

web: python3 ./changedetection.py -C -d ./datastore -p $PORT

67

README-pip.md

Normal file

@@ -0,0 +1,67 @@

|

||||

# changedetection.io

|

||||

|

||||

<a href="https://hub.docker.com/r/dgtlmoon/changedetection.io" target="_blank" title="Change detection docker hub">

|

||||

<img src="https://img.shields.io/docker/pulls/dgtlmoon/changedetection.io" alt="Docker Pulls"/>

|

||||

</a>

|

||||

<a href="https://hub.docker.com/r/dgtlmoon/changedetection.io" target="_blank" title="Change detection docker hub">

|

||||

<img src="https://img.shields.io/github/v/release/dgtlmoon/changedetection.io" alt="Change detection latest tag version"/>

|

||||

</a>

|

||||

|

||||

## Self-hosted open source change monitoring of web pages.

|

||||

|

||||

_Know when web pages change! Stay ontop of new information!_

|

||||

|

||||

Live your data-life *pro-actively* instead of *re-actively*, do not rely on manipulative social media for consuming important information.

|

||||

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/screenshot.png" style="max-width:100%;" alt="Self-hosted web page change monitoring" title="Self-hosted web page change monitoring" />

|

||||

|

||||

|

||||

**Get your own private instance now! Let us host it for you!**

|

||||

|

||||

[**Try our $6.99/month subscription - unlimited checks, watches and notifications!**](https://lemonade.changedetection.io/start), choose from different geographical locations, let us handle everything for you.

|

||||

|

||||

|

||||

|

||||

#### Example use cases

|

||||

|

||||

Know when ...

|

||||

|

||||

- Government department updates (changes are often only on their websites)

|

||||

- Local government news (changes are often only on their websites)

|

||||

- New software releases, security advisories when you're not on their mailing list.

|

||||

- Festivals with changes

|

||||

- Real estate listing changes

|

||||

- COVID-related news from government websites

|

||||

- Detect and monitor changes in JSON API responses

|

||||

- API monitoring and alerting

|

||||

|

||||

**Get monitoring now!**

|

||||

|

||||

```bash

|

||||

$ pip3 install changedetection.io

|

||||

```

|

||||

|

||||

Specify a target for the *datastore path* with `-d` (required) and a *listening port* with `-p` (defaults to `5000`)

|

||||

|

||||

```bash

|

||||

$ changedetection.io -d /path/to/empty/data/dir -p 5000

|

||||

```

|

||||

|

||||

|

||||

Then visit http://127.0.0.1:5000. You should now be able to access the UI.

|

||||

|

||||

### Features

|

||||

- Website monitoring

|

||||

- Change detection of content and analyses

|

||||

- Filters on change (Select by CSS or JSON)

|

||||

- Triggers (Wait for text, wait for regex)

|

||||

- Notification support

|

||||

- JSON API Monitoring

|

||||

- Parse JSON embedded in HTML

|

||||

- (Reverse) Proxy support

|

||||

- Javascript support via WebDriver

|

||||

- Raspberry Pi (arm v6/v7/64 support)

|

||||

|

||||

See https://github.com/dgtlmoon/changedetection.io for more information.

|

||||

|

||||

183

README.md

@@ -1,74 +1,122 @@

|

||||

# changedetection.io

|

||||

|

||||

<a href="https://hub.docker.com/r/dgtlmoon/changedetection.io" target="_blank" title="Change detection docker hub">

|

||||

<img src="https://img.shields.io/docker/pulls/dgtlmoon/changedetection.io" alt="Docker Pulls"/>

|

||||

</a>

|

||||

<a href="https://hub.docker.com/r/dgtlmoon/changedetection.io" target="_blank" title="Change detection docker hub">

|

||||

<img src="https://img.shields.io/github/v/release/dgtlmoon/changedetection.io" alt="Change detection latest tag version"/>

|

||||

</a>

|

||||

[![Release Version][release-shield]][release-link] [![Docker Pulls][docker-pulls]][docker-link] [![License][license-shield]](LICENSE.md)

|

||||

|

||||

## Self-hosted change monitoring of web pages.

|

||||

|

||||

|

||||

## Self-Hosted, Open Source, Change Monitoring of Web Pages

|

||||

|

||||

_Know when web pages change! Stay on top of new information!_

|

||||

|

||||

Live your data-life *pro-actively* instead of *re-actively*, do not rely on manipulative social media for consuming important information.

|

||||

Live your data-life *pro-actively* instead of *re-actively*.

|

||||

|

||||

Free, Open-source web page monitoring, notification and change detection. Don't have time? [**Try our $6.99/month subscription - unlimited checks and watches!**](https://lemonade.changedetection.io/start)

|

||||

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/screenshot.png" style="max-width:100%;" alt="Self-hosted web page change monitoring" title="Self-hosted web page change monitoring" />

|

||||

[<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/screenshot.png" style="max-width:100%;" alt="Self-hosted web page change monitoring" title="Self-hosted web page change monitoring" />](https://lemonade.changedetection.io/start)

|

||||

|

||||

|

||||

**Get your own private instance now! Let us host it for you!**

|

||||

|

||||

[**Try our $6.99/month subscription - unlimited checks and watches!**](https://lemonade.changedetection.io/start), _half the price of other website change monitoring services, and it comes with unlimited watches & checks!_

|

||||

|

||||

|

||||

|

||||

- Automatic Updates, Automatic Backups, No Heroku "paused application", don't miss a change!

|

||||

- Javascript browser included

|

||||

- Unlimited checks and watches!

|

||||

|

||||

|

||||

#### Example use cases

|

||||

|

||||

Know when ...

|

||||

|

||||

- Government department updates (changes are often only on their websites)

|

||||

- Local government news (changes are often only on their websites)

|

||||

- Products and services have a change in pricing

|

||||

- Governmental department updates (changes are often only on their websites)

|

||||

- New software releases, security advisories when you're not on their mailing list.

|

||||

- Festivals with changes

|

||||

- Real estate listing changes

|

||||

- COVID-related news from government websites

|

||||

- University/organisation news from their website

|

||||

- Detect and monitor changes in JSON API responses

|

||||

- API monitoring and alerting

|

||||

- JSON API monitoring and alerting

|

||||

- Changes in legal and other documents

|

||||

- Trigger API calls via notifications when text appears on a website

|

||||

- Glue together APIs using the JSON filter and JSON notifications

|

||||

- Create RSS feeds based on changes in web content

|

||||

- Monitor HTML source code for unexpected changes, strengthen your PCI compliance

|

||||

- You have a very sensitive list of URLs to watch and you do _not_ want to use the paid alternatives. (Remember, _you_ are the product)

|

||||

|

||||

_Need an actual Chrome runner with Javascript support? see the experimental <a href="https://github.com/dgtlmoon/changedetection.io/tree/javascript-browser">Javascript/Chrome support changedetection.io branch!</a>_

|

||||

_Need an actual Chrome runner with Javascript support? We support fetching via WebDriver!_

|

||||

|

||||

**Get monitoring now! super simple, one command!**

|

||||

Run the python code on your own machine by cloning this repository, or with <a href="https://docs.docker.com/get-docker/">docker</a> and/or <a href="https://www.digitalocean.com/community/tutorial_collections/how-to-install-docker-compose">docker-compose</a>

|

||||

## Screenshots

|

||||

|

||||

With one docker-compose command

|

||||

### Examine differences in content.

|

||||

|

||||

Easily see what changed, examine by word, line, or individual character.

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/screenshot-diff.png" style="max-width:100%;" alt="Self-hosted web page change monitoring context difference " title="Self-hosted web page change monitoring context difference " />

|

||||

|

||||

Please :star: star :star: this project and help it grow! https://github.com/dgtlmoon/changedetection.io/

|

||||

|

||||

### Target elements with the Visual Selector tool.

|

||||

|

||||

Available when connected to a <a href="https://github.com/dgtlmoon/changedetection.io/wiki/Playwright-content-fetcher">playwright content fetcher</a> (available also as part of our subscription service)

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/visualselector-anim.gif" style="max-width:100%;" alt="Self-hosted web page change monitoring context difference " title="Self-hosted web page change monitoring context difference " />

|

||||

|

||||

## Installation

|

||||

|

||||

### Docker

|

||||

|

||||

With Docker Compose, just clone this repository and run:

|

||||

```bash

|

||||

docker-compose up -d

|

||||

$ docker-compose up -d

|

||||

```

|

||||

Docker standalone

|

||||

```bash

|

||||

$ docker run -d --restart always -p "127.0.0.1:5000:5000" -v datastore-volume:/datastore --name changedetection.io dgtlmoon/changedetection.io

|

||||

```

|

||||

|

||||

or

|

||||

### Windows

|

||||

|

||||

See the install instructions at the wiki https://github.com/dgtlmoon/changedetection.io/wiki/Microsoft-Windows

|

||||

|

||||

### Python Pip

|

||||

|

||||

Check out our pypi page https://pypi.org/project/changedetection.io/

|

||||

|

||||

```bash

|

||||

docker run -d --restart always -p "127.0.0.1:5000:5000" -v datastore-volume:/datastore --name changedetection.io dgtlmoon/changedetection.io

|

||||

```

|

||||

$ pip3 install changedetection.io

|

||||

$ changedetection.io -d /path/to/empty/data/dir -p 5000

|

||||

```

|

||||

|

||||

Now visit http://127.0.0.1:5000 , You should now be able to access the UI.

|

||||

Then visit http://127.0.0.1:5000. You should now be able to access the UI.

|

||||

|

||||

#### Updating to latest version

|

||||

_Now with per-site configurable support for using a fast built in HTTP fetcher or use a Chrome based fetcher for monitoring of JavaScript websites!_

|

||||

|

||||

Highly recommended :)

|

||||

## Updating changedetection.io

|

||||

|

||||

```bash

|

||||

### Docker

|

||||

```

|

||||

docker pull dgtlmoon/changedetection.io

|

||||

docker kill $(docker ps -a|grep changedetection.io|awk '{print $1}')

|

||||

docker rm $(docker ps -a|grep changedetection.io|awk '{print $1}')

|

||||

docker run -d --restart always -p "127.0.0.1:5000:5000" -v datastore-volume:/datastore --name changedetection.io dgtlmoon/changedetection.io

|

||||

```

|

||||

|

||||

### Screenshots

|

||||

|

||||

Examining differences in content.

|

||||

### docker-compose

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/screenshot-diff.png" style="max-width:100%;" alt="Self-hosted web page change monitoring context difference " title="Self-hosted web page change monitoring context difference " />

|

||||

```bash

|

||||

docker-compose pull && docker-compose up -d

|

||||

```

|

||||

|

||||

Please :star: star :star: this project and help it grow! https://github.com/dgtlmoon/changedetection.io/

|

||||

See the wiki for more information https://github.com/dgtlmoon/changedetection.io/wiki

|

||||

|

||||

### Notifications

|

||||

|

||||

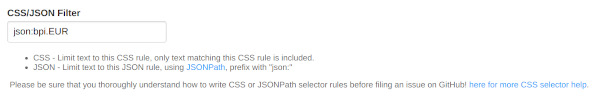

## Filters

|

||||

XPath, JSONPath and CSS support comes baked in! You can be as specific as you need, and you can use XPath expressions exported from various XPath element query creation tools.

|

||||

|

||||

(We support LXML `re:test`, `re:match` and `re:replace`.)

|

||||

|

||||

## Notifications

|

||||

|

||||

ChangeDetection.io supports a large number of notification services (including email, Office 365, custom APIs, etc.) that can be notified when a change is detected on a web page, thanks to the <a href="https://github.com/caronc/apprise">apprise</a> library.

|

||||

Simply set one or more notification URLs in the _[edit]_ tab of that watch.

|

||||

@@ -86,60 +134,67 @@ Just some examples

|

||||

json://someserver.com/custom-api

|

||||

syslog://

|

||||

|

||||

<a href="https://github.com/caronc/apprise">And everything else in this list!</a>

|

||||

<a href="https://github.com/caronc/apprise#popular-notification-services">And everything else in this list!</a>

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/screenshot-notifications.png" style="max-width:100%;" alt="Self-hosted web page change monitoring notifications" title="Self-hosted web page change monitoring notifications" />

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/screenshot-notifications.png" style="max-width:100%;" alt="Self-hosted web page change monitoring notifications" title="Self-hosted web page change monitoring notifications" />

|

||||

|

||||

Now you can also customise your notification content!

|

||||

|

||||

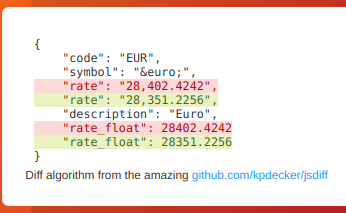

### JSON API Monitoring

|

||||

## JSON API Monitoring

|

||||

|

||||

Detect changes and monitor data in JSON APIs by using the built-in JSONPath selectors as a filter / selector.

|

||||

|

||||

|

||||

|

||||

|

||||

This will re-parse the JSON and apply formatting to the text, making it super easy to monitor and detect changes in JSON API results.
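To illustrate why re-parsing and re-formatting helps, here is a minimal sketch using only the Python standard library. It is not the project's actual implementation: the function name `normalise_json`, the 4-space indent and the use of `sort_keys` are assumptions made for this example. The idea is that two responses that differ only in whitespace or key order compare as equal, while a real value change still shows up.

```python
import json


def normalise_json(raw_text):
    """Re-parse JSON text and re-serialise it with stable formatting.

    Hypothetical helper for illustration only: the indent width and the
    key sorting are assumptions, not the project's documented behaviour.
    """
    return json.dumps(json.loads(raw_text), indent=4, sort_keys=True)


# Same data, different whitespace and key order.
before = normalise_json('{"price": 23.50, "name": "Widget"}')
after = normalise_json('{"name":"Widget","price":23.50}')

# Same shape, but the price really changed.
changed = normalise_json('{"name":"Widget","price":24.00}')

print(before == after)    # formatting noise is ignored
print(before != changed)  # a real change is still detected
```

Because each key ends up on its own line, a line-based diff of two normalised snapshots points directly at the field that changed.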

|

||||

|

||||

|

||||

|

||||

|

||||

### Proxy

|

||||

### Parse JSON embedded in HTML!

|

||||

|

||||

A proxy for ChangeDetection.io can be configured by setting the

|

||||

`HTTP_PROXY`, `HTTPS_PROXY` environment variables; examples are also in the `docker-compose.yml`

|

||||

|

||||

`NO_PROXY` exclude list can be specified by following `"localhost,192.168.0.0/24"`

|

||||

|

||||

as `docker run` with `-e`

|

||||

When you enable a `json:` filter, you can even automatically extract and parse embedded JSON inside an HTML page! Amazingly handy for sites that build content based on JSON, such as many e-commerce websites.

|

||||

|

||||

```

|

||||

docker run -d --restart always -e HTTPS_PROXY="socks5h://10.10.1.10:1080" -p "127.0.0.1:5000:5000" -v datastore-volume:/datastore --name changedetection.io dgtlmoon/changedetection.io

|

||||

```

|

||||

<html>

|

||||

...

|

||||

<script type="application/ld+json">

|

||||

{"@context":"http://schema.org","@type":"Product","name":"Nan Optipro Stage 1 Baby Formula 800g","price": 23.50 }

|

||||

</script>

|

||||

```

|

||||

|

||||

With `docker-compose`, see the `Proxy support example` in <a href="https://github.com/dgtlmoon/changedetection.io/blob/master/docker-compose.yml">docker-compose.yml</a>.

|

||||

`json:$.price` would give `23.50`, or you can extract the whole structure
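As a rough illustration of what extracting embedded JSON from a page involves, the sketch below pulls the `application/ld+json` block out of the example HTML above using only the Python standard library. It is not the project's actual code: the class `LdJsonExtractor` and the function `extract_embedded_json` are hypothetical names, and a plain dictionary lookup stands in for the `json:$.price` JSONPath filter.

```python
import json
from html.parser import HTMLParser


class LdJsonExtractor(HTMLParser):
    """Collect the parsed body of every <script type="application/ld+json"> tag.

    Hypothetical helper for illustration; not part of changedetection.io.
    """

    def __init__(self):
        super().__init__()
        self._in_ld_json = False
        self._buffer = []
        self.documents = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_ld_json = True
            self._buffer = []

    def handle_data(self, data):
        # Script bodies arrive here as raw text while the flag is set.
        if self._in_ld_json:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_ld_json:
            self._in_ld_json = False
            self.documents.append(json.loads("".join(self._buffer)))


def extract_embedded_json(html):
    """Return a list with one parsed object per embedded JSON block."""
    parser = LdJsonExtractor()
    parser.feed(html)
    parser.close()
    return parser.documents


page = """<html>
...
<script type="application/ld+json">
{"@context":"http://schema.org","@type":"Product","name":"Nan Optipro Stage 1 Baby Formula 800g","price": 23.50 }
</script>
</html>"""

product = extract_embedded_json(page)[0]
print(product["price"])  # the value that a `json:$.price` filter selects
```

Once the block is parsed, watching a single field such as the price is less noisy than diffing the whole rendered page.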

|

||||

|

||||

For more information see https://docs.python-requests.org/en/master/user/advanced/#proxies

|

||||

## Proxy configuration

|

||||

|

||||

This proxy support also extends to the notifications https://github.com/caronc/apprise/issues/387#issuecomment-841718867

|

||||

See the wiki https://github.com/dgtlmoon/changedetection.io/wiki/Proxy-configuration

|

||||

|

||||

### Notes

|

||||

## Raspberry Pi support?

|

||||

|

||||

- ~~Does not yet support Javascript~~

|

||||

- ~~Wont work with Cloudfare type "Please turn on javascript" protected pages~~

|

||||

- You can use the 'headers' section to monitor password protected web page changes

|

||||

|

||||

See the experimental <a href="https://github.com/dgtlmoon/changedetection.io/tree/javascript-browser">Javascript/Chrome browser support!</a>

|

||||

|

||||

### RaspberriPi support?

|

||||

|

||||

RaspberriPi and linux/arm/v6 linux/arm/v7 arm64 devices are supported!

|

||||

Raspberry Pi and linux/arm/v6 linux/arm/v7 arm64 devices are supported! See the wiki for [details](https://github.com/dgtlmoon/changedetection.io/wiki/Fetching-pages-with-WebDriver)

|

||||

|

||||

|

||||

### Support us

|

||||

## Support us

|

||||

|

||||

Do you use changedetection.io to make money? Does it save you time or money? Does it make your life easier or less stressful? Remember, we write this software when we should be doing actual paid work; we have to buy food and pay rent just like you.

|

||||

|

||||

Please support us, even small amounts help a LOT.

|

||||

|

||||

BTC `1PLFN327GyUarpJd7nVe7Reqg9qHx5frNn`

|

||||

Firstly, consider taking out a [change detection monthly subscription - unlimited checks and watches](https://lemonade.changedetection.io/start). Even if you don't use it, you still get the warm fuzzy feeling of helping out the project. (And who knows, you might just use it!)

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/btc-support.png" style="max-width:50%;" alt="Support us!" />

|

||||

Or donate an amount directly via [PayPal](https://www.paypal.com/donate/?hosted_button_id=7CP6HR9ZCNDYJ)

|

||||

|

||||

Or BTC `1PLFN327GyUarpJd7nVe7Reqg9qHx5frNn`

|

||||

|

||||

<img src="https://raw.githubusercontent.com/dgtlmoon/changedetection.io/master/docs/btc-support.png" style="max-width:50%;" alt="Support us!" />

|

||||

|

||||

## Commercial Support

|

||||

|

||||

I offer commercial support. This software is depended on by network security, aerospace, data-science and data-journalism professionals, to name just a few. Please reach out at dgtlmoon@gmail.com with any enquiries; I am more than glad to work with your organisation to further the possibilities of what can be done with changedetection.io

|

||||

|

||||

|

||||

[release-shield]: https://img.shields.io:/github/v/release/dgtlmoon/changedetection.io?style=for-the-badge

|

||||

[docker-pulls]: https://img.shields.io/docker/pulls/dgtlmoon/changedetection.io?style=for-the-badge

|

||||

[test-shield]: https://github.com/dgtlmoon/changedetection.io/actions/workflows/test-only.yml/badge.svg?branch=master

|

||||

|

||||

[license-shield]: https://img.shields.io/github/license/dgtlmoon/changedetection.io.svg?style=for-the-badge

|

||||

[release-link]: https://github.com/dgtlmoon/changedetection.io/releases

|

||||

[docker-link]: https://hub.docker.com/r/dgtlmoon/changedetection.io

|

||||

|

||||

21

app.json

Normal file

@@ -0,0 +1,21 @@

|

||||

{

|

||||

"name": "ChangeDetection.io",

|

||||

"description": "The best and simplest self-hosted open source website change detection monitoring and notification service.",

|

||||

"keywords": [

|

||||

"changedetection",

|

||||

"website monitoring"

|

||||

],

|

||||

"repository": "https://github.com/dgtlmoon/changedetection.io",

|

||||

"success_url": "/",

|

||||

"scripts": {

|

||||

},

|

||||

"env": {

|

||||

},

|

||||

"formation": {

|

||||

"web": {

|

||||

"quantity": 1,

|

||||

"size": "free"

|

||||

}

|

||||

},

|

||||

"image": "heroku/python"

|

||||

}

|

||||

@@ -1,880 +0,0 @@

|

||||

#!/usr/bin/python3

|

||||

|

||||

|

||||

# @todo logging

|

||||

# @todo extra options for url like , verify=False etc.

|

||||

# @todo enable https://urllib3.readthedocs.io/en/latest/user-guide.html#ssl as option?

|

||||

# @todo option for interval day/6 hour/etc

|

||||

# @todo on change detected, config for calling some API

|

||||

# @todo fetch title into json

|

||||

# https://distill.io/features

|

||||

# proxy per check

|

||||

# - flask_cors, itsdangerous,MarkupSafe

|

||||

|

||||

import time

|

||||

import os

|

||||

import timeago

|

||||

import flask_login

|

||||

from flask_login import login_required

|

||||

|

||||

import threading

|

||||

from threading import Event

|

||||

|

||||

import queue

|

||||

|

||||

from flask import Flask, render_template, request, send_from_directory, abort, redirect, url_for, flash

|

||||

|

||||

from feedgen.feed import FeedGenerator

|

||||

from flask import make_response

|

||||

import datetime

|

||||

import pytz

|

||||

|

||||

datastore = None

|

||||

|

||||

# Local

|

||||

running_update_threads = []

|

||||

ticker_thread = None

|

||||

|

||||

extra_stylesheets = []

|

||||

|

||||

update_q = queue.Queue()

|

||||

|

||||

notification_q = queue.Queue()

|

||||

|

||||

app = Flask(__name__, static_url_path="/var/www/change-detection/backend/static")

|

||||

|

||||

# Stop browser caching of assets

|

||||

app.config['SEND_FILE_MAX_AGE_DEFAULT'] = 0

|

||||

|

||||

app.config.exit = Event()

|

||||

|

||||

app.config['NEW_VERSION_AVAILABLE'] = False

|

||||

|

||||

app.config['LOGIN_DISABLED'] = False

|

||||

|

||||

#app.config["EXPLAIN_TEMPLATE_LOADING"] = True

|

||||

|

||||

# Disables caching of the templates

|

||||

app.config['TEMPLATES_AUTO_RELOAD'] = True

|

||||

|

||||

|

||||

def init_app_secret(datastore_path):

|

||||

secret = ""

|

||||

|

||||

path = "{}/secret.txt".format(datastore_path)

|

||||

|

||||

try:

|

||||

with open(path, "r") as f:

|

||||

secret = f.read()

|

||||

|

||||

except FileNotFoundError:

|

||||

import secrets

|

||||

with open(path, "w") as f:

|

||||

secret = secrets.token_hex(32)

|

||||

f.write(secret)

|

||||

|

||||

return secret

|

||||

|

||||

# Remember python is by reference

|

||||

# populate_form in wtforms didn't work for me. (try using a setattr() obj type on datastore.watch?)

|

||||

def populate_form_from_watch(form, watch):

|

||||

for i in form.__dict__.keys():

|

||||

if i[0] != '_':

|

||||

p = getattr(form, i)

|

||||

if hasattr(p, 'data') and i in watch:

|

||||

if not p.data:

|

||||

setattr(p, "data", watch[i])

|

||||

|

||||

|

||||

# We use the whole watch object from the store/JSON so we can see if there's some related status in terms of a thread

|

||||

# running or something similar.

|

||||

@app.template_filter('format_last_checked_time')

|

||||

def _jinja2_filter_datetime(watch_obj, format="%Y-%m-%d %H:%M:%S"):

|

||||

# Worker thread tells us which UUID it is currently processing.

|

||||

for t in running_update_threads:

|

||||

if t.current_uuid == watch_obj['uuid']:

|

||||

return "Checking now.."

|

||||

|

||||

if watch_obj['last_checked'] == 0:

|

||||

return 'Not yet'

|

||||

|

||||

return timeago.format(int(watch_obj['last_checked']), time.time())

|

||||

|

||||

|

||||

# @app.context_processor

|

||||

# def timeago():

|

||||

# def _timeago(lower_time, now):

|

||||

# return timeago.format(lower_time, now)

|

||||

# return dict(timeago=_timeago)

|

||||

|

||||

@app.template_filter('format_timestamp_timeago')

|

||||

def _jinja2_filter_datetimestamp(timestamp, format="%Y-%m-%d %H:%M:%S"):

|

||||

return timeago.format(timestamp, time.time())

|

||||

# return timeago.format(timestamp, time.time())

|

||||

# return datetime.datetime.utcfromtimestamp(timestamp).strftime(format)

|

||||

|

||||

|

||||

class User(flask_login.UserMixin):

|

||||

id=None

|

||||

|

||||

def set_password(self, password):

|

||||

return True

|

||||

def get_user(self, email="defaultuser@changedetection.io"):

|

||||

return self

|

||||

def is_authenticated(self):

|

||||

|

||||

return True

|

||||

def is_active(self):

|

||||

return True

|

||||

def is_anonymous(self):

|

||||

return False

|

||||

def get_id(self):

|

||||

return str(self.id)

|

||||

|

||||

def check_password(self, password):

|

||||

|

||||

import hashlib

|

||||

import base64

|

||||

|

||||

# Getting the values back out

|

||||

raw_salt_pass = base64.b64decode(datastore.data['settings']['application']['password'])

|

||||

salt_from_storage = raw_salt_pass[:32] # 32 is the length of the salt

|

||||

|

||||

# Use the exact same setup you used to generate the key, but this time put in the password to check

|

||||

new_key = hashlib.pbkdf2_hmac(

|

||||

'sha256',

|

||||

password.encode('utf-8'), # Convert the password to bytes

|

||||

salt_from_storage,

|

||||

100000

|

||||

)

|

||||

new_key = salt_from_storage + new_key

|

||||

|

||||

return new_key == raw_salt_pass

|

||||

|

||||

pass

|

||||

|

||||

def changedetection_app(config=None, datastore_o=None):

|

||||

global datastore

|

||||

datastore = datastore_o

|

||||

|

||||

app.config.update(dict(DEBUG=True))

|

||||

#app.config.update(config or {})

|

||||

|

||||

login_manager = flask_login.LoginManager(app)

|

||||

login_manager.login_view = 'login'

|

||||

app.secret_key = init_app_secret(config['datastore_path'])

|

||||

|

||||

# Setup cors headers to allow all domains

|

||||

# https://flask-cors.readthedocs.io/en/latest/

|

||||

# CORS(app)

|

||||

|

||||

@login_manager.user_loader

|

||||

def user_loader(email):

|

||||

user = User()

|

||||

user.get_user(email)

|

||||

return user

|

||||

|

||||

@login_manager.unauthorized_handler

|

||||

def unauthorized_handler():

|

||||

# @todo validate it's a URL of this host and use that

|

||||

return redirect(url_for('login', next=url_for('index')))

|

||||

|

||||

@app.route('/logout')

|

||||

def logout():

|

||||

flask_login.logout_user()

|

||||

return redirect(url_for('index'))

|

||||

|

||||

# https://github.com/pallets/flask/blob/93dd1709d05a1cf0e886df6223377bdab3b077fb/examples/tutorial/flaskr/__init__.py#L39

|

||||

# You can divide up the stuff like this